1. Introduction

1.1. Exploring Frontier of Interaction: Human-Computer Interface in Virtual Reality

Virtual Reality (VR) has been widely adopted in engineering education to support immersive learning, particularly in remote and simulation-based laboratory environments (Brey, 2014; Kozak et al., 2014). During the COVID-19 pandemic, virtual laboratories became essential for sustaining hands-on learning when access to physical facilities was restricted (Alfaisal et al., 2024; Jeyakumar et al., 2021; Zhang et al., 2022). However, the effectiveness of VR-based systems depends strongly on the quality of human–computer interaction (HCI), which governs how intuitively users manipulate virtual objects (Zhang et al., 2018a).

Natural interaction modalities, such as hand gestures, can enhance immersion and engagement compared to traditional mouse–keyboard interfaces, but achieving responsive, accurate, and scalable gesture-based interaction remains challenging, especially in educational settings that require low-cost, widely accessible solutions (Halabi et al., 2023). This study investigates the technical and practical challenges of HCI in VR environments and explores opportunities to improve usability and effectiveness across educational and industrial applications.

1.2. Challenges in Developing Natural HCI For Virtual Labs

Developing natural HCI for virtual laboratories presents challenges in accurate gesture recognition, real-time responsiveness, support for diverse gestures, and seamless integration with laboratory tasks. Addressing these challenges requires combining advanced gesture recognition techniques with user-centered design while accommodating real-world educational constraints.

While commercial VR platforms with built-in hand tracking (e.g., Meta Quest Pro and Apple Vision Pro (n.d.-a)) offer immersive interaction, their educational adoption is limited by high cost, proprietary hardware, and scalability constraints. In contrast, existing webcam-based gesture recognition approaches often lack robustness, speed, or accuracy in realistic classroom environments (Zhang et al., 2018b).

To address these limitations, this study proposes a YOLO-based hand gesture recognition framework that enables natural HCI using only a standard webcam. Leveraging the real-time efficiency of a modified YOLO-NAS Pose model, the approach reduces hardware dependency and cost while maintaining the responsiveness and accuracy required for engineering laboratory education, providing a practical alternative to high-end VR systems for scalable virtual lab deployment.

2. Gesture Recognition with YOLO

2.1. YOLO Framework

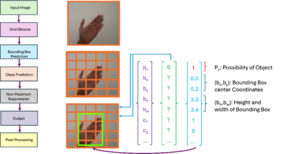

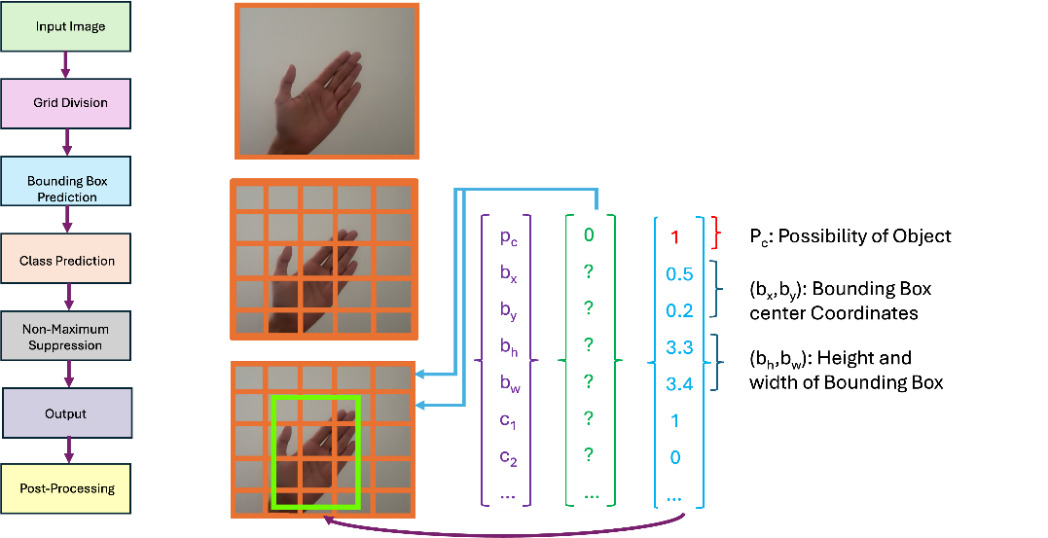

The YOLO (You Only Look Once) framework enables real-time object detection through a single-pass architecture that jointly predicts bounding boxes and class labels (Redmon et al., 2016). By formulating detection as a regression problem, YOLO achieves low-latency inference with competitive accuracy, making it well suited for time-sensitive human–computer interaction tasks in virtual laboratory environments (Figure 1). Its unified design eliminates multi-stage detection pipelines, reducing computational overhead while supporting efficient extension to hand detection and gesture-based interaction.

2.2. Hand Gesture Recognition

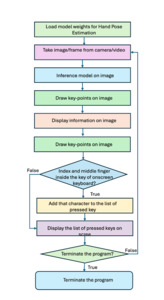

The proposed hand gesture recognition system consists of three sequential steps: hand recognition, hand tracking, and gesture classification.

First, YOLO detects and localizes the user’s hand in real time using a standard webcam. The detected bounding boxes are then updated continuously across frames to enable stable hand tracking. Finally, tracked hand features are classified into predefined gesture categories, allowing real-time interpretation of user intent for natural interaction within virtual environments.

This three-step process, illustrated in Figure 2, underscores the versatility and effectiveness of the YOLO framework in enhancing webcam-based hand gesture interaction.
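The three steps can be sketched as a per-frame loop. This is only an illustration under stated assumptions: `detect_hand` and `classify_gesture` are hypothetical callables standing in for the detector and the gesture classifier, and the exponential box smoothing in `BoxTracker` (with its `alpha` value) is a simple stand-in, since the tracking method itself is not specified here.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


@dataclass
class BoxTracker:
    """Smooths the detected box across frames (illustrative stand-in tracker)."""
    alpha: float = 0.6            # weight of the newest detection (assumed value)
    state: Optional[Box] = None   # last smoothed box, or None if no hand is tracked

    def update(self, detection: Optional[Box]) -> Optional[Box]:
        if detection is None:
            # Hand lost: reset so a stale box is never reused.
            self.state = None
        elif self.state is None:
            # First detection after a gap: start tracking from it directly.
            self.state = detection
        else:
            # Blend the new detection with the previous smoothed box.
            self.state = tuple(
                self.alpha * new + (1.0 - self.alpha) * old
                for new, old in zip(detection, self.state)
            )
        return self.state


def run_pipeline(
    frames: Iterable[object],
    detect_hand: Callable[[object], Optional[Box]],
    classify_gesture: Callable[[object, Box], str],
) -> Iterator[Optional[str]]:
    """Yields one gesture label per frame, or None when no hand is tracked."""
    tracker = BoxTracker()
    for frame in frames:
        box = tracker.update(detect_hand(frame))                     # steps 1 and 2
        yield None if box is None else classify_gesture(frame, box)  # step 3
```

Keeping detection, tracking, and classification as separate callables makes it possible to swap any one stage without touching the loop.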

3. Natural HCI Design Based on Gesture Recognition

3.1. Gesture Recognition Implementation

This section details the modifications made to the standard YOLO-NAS Pose model, the specific implementation of the gesture recognition system, and the training methodology used to improve real-time hand gesture recognition in the virtual electrical power lab.

3.1.1 Enhancements to the standard YOLO-NAS pose model

The standard YOLO-NAS Pose model is optimized for full-body pose estimation and is not directly suited for fine-grained hand gesture recognition. To enable natural hand-based interaction in virtual laboratory environments, several targeted modifications were introduced to improve efficiency, accuracy, and real-time performance (Figure 2).

First, the feature extraction layers were restructured to prioritize hand region detection rather than full-body keypoints, reducing unnecessary computational overhead and improving gesture tracking accuracy.

Second, the original deep convolutional backbone was replaced with a lightweight architecture based on depthwise separable convolutions, reducing average per-frame inference latency by approximately 35% without compromising accuracy. This reduction was measured on a validation set of approximately 1,000 frames, evaluated on an NVIDIA RTX 3090 GPU with a batch size of one during model optimization, while cross-model benchmarking was conducted on an NVIDIA T4 for fair comparison.
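To see why depthwise separable convolutions lighten a backbone, it helps to compare weight counts for a single layer. The sketch below uses the standard formulas (bias terms ignored); the 3×3 kernel and the 128→256 channel sizes are illustrative assumptions, not the layer sizes of the modified model. A parameter reduction does not translate one-to-one into latency, so the measured 35% figure is not derived from this arithmetic.

```python
def standard_conv_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a standard convolution: one k x k x c_in filter per output channel."""
    return kernel * kernel * c_in * c_out


def depthwise_separable_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a depthwise (k x k per input channel) plus pointwise (1 x 1) pair."""
    depthwise = kernel * kernel * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


if __name__ == "__main__":
    k, c_in, c_out = 3, 128, 256  # illustrative layer shape (assumption)
    full = standard_conv_params(k, c_in, c_out)
    light = depthwise_separable_params(k, c_in, c_out)
    # The ratio equals 1/c_out + 1/k^2 for any layer shape.
    print(f"standard={full}, separable={light}, ratio={light / full:.4f}")
```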

Third, an adaptive anchor box mechanism was introduced to dynamically adjust bounding box sizes based on average hand dimensions across different virtual environments. This modification improved detection accuracy by 7.3%, as measured using Object Keypoint Similarity (OKS) on a held-out validation subset comprising 20% of the augmented dataset.
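For reference, Object Keypoint Similarity can be sketched as follows, using the COCO-style definition in which each visible keypoint contributes exp(-d² / (2·s²·k²)), where d is the distance to the ground truth, s² the object area, and k a per-keypoint constant. The function name and the per-keypoint constants passed in `kappas` are assumptions; the constants used for hand keypoints in this study are not stated.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def object_keypoint_similarity(
    pred: Sequence[Point],
    truth: Sequence[Point],
    visible: Sequence[bool],
    area: float,
    kappas: Sequence[float],
) -> float:
    """COCO-style OKS averaged over visible keypoints; 0.0 if none are visible."""
    total = 0.0
    count = 0
    for (px, py), (tx, ty), is_visible, kappa in zip(pred, truth, visible, kappas):
        if not is_visible:
            continue  # unlabeled keypoints do not contribute
        squared_distance = (px - tx) ** 2 + (py - ty) ** 2
        total += math.exp(-squared_distance / (2.0 * area * kappa * kappa))
        count += 1
    return total / count if count else 0.0
```

A score of 1.0 means every visible keypoint coincides with its annotation; the score decays toward 0 as predictions drift, more slowly for larger hands (larger `area`).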

Finally, a gesture-specific classification head was developed to distinguish fine-grained hand gestures relevant to electrical circuit manipulation, including pointing, pinching, and swiping. This customization reduced false positives by 12%, improving overall classification reliability.

All these enhancements tailor the YOLO-NAS Pose model for efficient and robust hand gesture recognition, making it well suited for scalable virtual laboratory applications in engineering education.

3.1.2 Implementation of hand gesture recognition system

Using the modified YOLO-NAS Pose model, we implemented a hand gesture recognition system to enable intuitive interaction with virtual electrical components in LVSIM-EMS. A custom dataset was designed to support robust gesture recognition under realistic virtual laboratory conditions.

The gesture set consists of three primary classes aligned with electrical laboratory interactions: pointing, pinching, and swiping. Pointing gestures were labeled when the index finger was extended and used to select terminals, switches, or circuit components. Pinching gestures were defined by the Euclidean distance between the index and middle fingertips and were used to rotate or adjust virtual components. Swiping gestures were labeled based on continuous lateral hand motion and were used for clearing, resetting, or navigating circuit configurations.
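As an illustration of how "continuous lateral hand motion" might be turned into a labeling rule, the sketch below checks a short window of hand-centroid positions. The function name, the pixel threshold `min_dx`, and the `max_dy_ratio` tolerance are all assumed values chosen for the example, not the criteria used to label the dataset.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def is_swipe(
    centroids: Sequence[Point],
    min_dx: float = 150.0,       # minimum horizontal travel in pixels (assumed)
    max_dy_ratio: float = 0.5,   # allowed vertical drift relative to travel (assumed)
) -> bool:
    """True if the centroid moved steadily sideways across the window."""
    if len(centroids) < 2:
        return False
    dx = centroids[-1][0] - centroids[0][0]
    dy = centroids[-1][1] - centroids[0][1]
    # "Continuous" is read here as: every frame-to-frame step goes the same way.
    steps = [b[0] - a[0] for a, b in zip(centroids, centroids[1:])]
    same_direction = all(s > 0 for s in steps) or all(s < 0 for s in steps)
    mostly_horizontal = abs(dy) <= max_dy_ratio * abs(dx)
    return same_direction and abs(dx) >= min_dx and mostly_horizontal
```

Requiring a consistent direction helps separate a deliberate swipe from jitter that happens to end far from where it started.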

The dataset was constructed from 163 base images and expanded through data augmentation to produce over 5,000 annotated samples, with approximately 1,600–1,800 samples per gesture class. Data collection incorporated diverse lighting conditions, including bright daylight (500–700 lux), standard laboratory lighting (300–500 lux), and low-light environments (100–200 lux). To improve generalization, hand poses were captured across ten orientations, including front-facing, side-facing, upward and downward tilts, partially occluded positions, and variations in finger spread and palm closure.

Background complexity was also varied to enhance adaptability, ranging from simple controlled backgrounds to moderate laboratory scenes and complex classroom environments with multiple hands present. Dataset diversity was further increased using standard augmentation techniques, including geometric transformations (flipping, rotation up to ±30°, and scaling), kernel filtering (Gaussian blur and contrast adjustment), and noise injection to simulate realistic environmental variations (Shorten & Khoshgoftaar, 2019). Both geometric and kernel-based augmentations were applied to preserve hand landmark integrity.
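Preserving landmark integrity under geometric augmentation means applying the same transform to the keypoint annotations as to the image. A minimal sketch for flipping and rotation is shown below; the function names are hypothetical, and the rotation sign convention must match whichever image-rotation routine is actually used.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def hflip_keypoints(keypoints: Sequence[Point], image_width: int) -> List[Point]:
    """Mirror keypoints to match a horizontally flipped image of the given width."""
    return [(image_width - 1 - x, y) for x, y in keypoints]


def rotate_keypoints(
    keypoints: Sequence[Point], angle_deg: float, center: Point
) -> List[Point]:
    """Rotate keypoints about `center`.

    In image coordinates (y pointing down), a positive angle appears
    clockwise on screen with this formula.
    """
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    cx, cy = center
    return [
        (cx + cos_t * (x - cx) - sin_t * (y - cy),
         cy + sin_t * (x - cx) + cos_t * (y - cy))
        for x, y in keypoints
    ]
```

Because rotation preserves distances, fingertip-to-fingertip measurements are unchanged by this step; scaling, by contrast, changes them proportionally.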

Hand keypoint annotations were generated using MMPose, a component of the open-source MMLab framework built on PyTorch (Dataset Annotation and Format Conversion — MMPose 1.3.1 Documentation, n.d.). An example of the dataset and its corresponding annotations is shown in Figure 3 (a).

3.1.3 Model training and optimization

The fine-tuned YOLO-NAS Pose model was trained using a combination of pre-trained weights and custom dataset adaptation to improve performance.

The training parameters were as follows:

- Batch Size: 32
- Learning Rate: 0.001 (with adaptive decay)
- Epochs: 150
- Optimizer: Adam (Adaptive Moment Estimation)
- Loss Function: Intersection-over-Union (IoU) + Object Keypoint Similarity (OKS)
- Regularization: L2 weight decay to prevent overfitting
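The IoU term of the loss compares predicted and ground-truth bounding boxes. A minimal sketch of the underlying overlap measure is given below for boxes in `(x_min, y_min, x_max, y_max)` form; the function name is hypothetical, and how the IoU and OKS terms are weighted against each other during training is not specified here.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def box_iou(a: Box, b: Box) -> float:
    """Intersection-over-Union of two axis-aligned boxes; 0.0 if they do not overlap."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    intersection = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0
```

A loss built on this measure is commonly written as `1 - box_iou(pred, target)`, so that perfect overlap yields zero loss.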

The model was pre-trained on the COCO2017 dataset (n.d.-b) (containing over 200,000 human posture images) before being fine-tuned on our custom dataset.

For hardware and computational setup, training was conducted using high-performance computing resources:

- GPU: NVIDIA RTX 3090 (24GB VRAM)
- CPU: Intel Core i9-12900K
- RAM: 64GB DDR5
- Frameworks: PyTorch 1.13.1, TensorFlow 2.9
- Operating System: Ubuntu 20.04 LTS

The modified model was evaluated based on accuracy, inference speed, and classification reliability. The performance metrics can be found in Table 1.

3.1.4 Posture estimation models

Pose estimation methods typically follow either top-down or bottom-up strategies (Kuo et al., 2011). Top-down approaches detect objects before estimating poses but often degrade in crowded scenes, while bottom-up approaches assemble poses from detected keypoints and are more robust to crowding but remain sensitive to occlusion.

In contrast, YOLO-NAS Pose performs object detection and pose estimation in a single pass using a parallel head architecture, avoiding the limitations of both approaches. Although YOLO-NAS and YOLO-NAS Pose share similar backbone and neck structures, their head modules differ significantly. YOLO-NAS is designed solely for object detection, whereas YOLO-NAS Pose jointly estimates object locations and pose keypoints by extracting pose information directly from images (GitHub - Open-Mmlab/Mmyolo: OpenMMLab YOLO Series Toolbox and Benchmark. Implemented RTMDet, RTMDet-Rotated,YOLOv5, YOLOv6, YOLOv7, YOLOv8,YOLOX, PPYOLOE, Etc., n.d.). The key architectural differences are summarized in Table 2.

The model is first trained on annotated human pose instances with 17 keypoints from the COCO2017 dataset and is subsequently fine-tuned on the custom hand gesture dataset. Fine-tuning transfers general pose-aware features to accurate hand posture estimation while mitigating data scarcity.

As illustrated in Figure 3, the annotation process defines 21 hand keypoints corresponding to finger joints, allowing the model to focus on fine-grained hand posture tracking and enabling reliable gesture recognition in virtual laboratory environments.

3.2. Natural HCI Design

To implement the virtual mouse and keyboard on a local system, we used OpenCV, an open-source computer vision library with Python bindings that is well suited to real-time computer vision applications (Dardagan et al., 2021).

3.2.1 Virtual Mouse

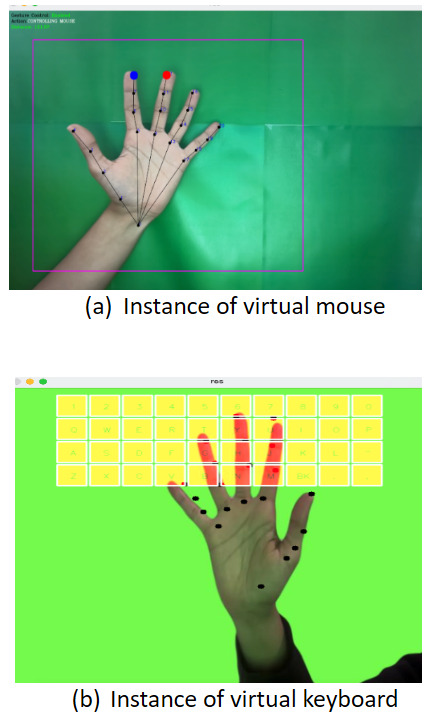

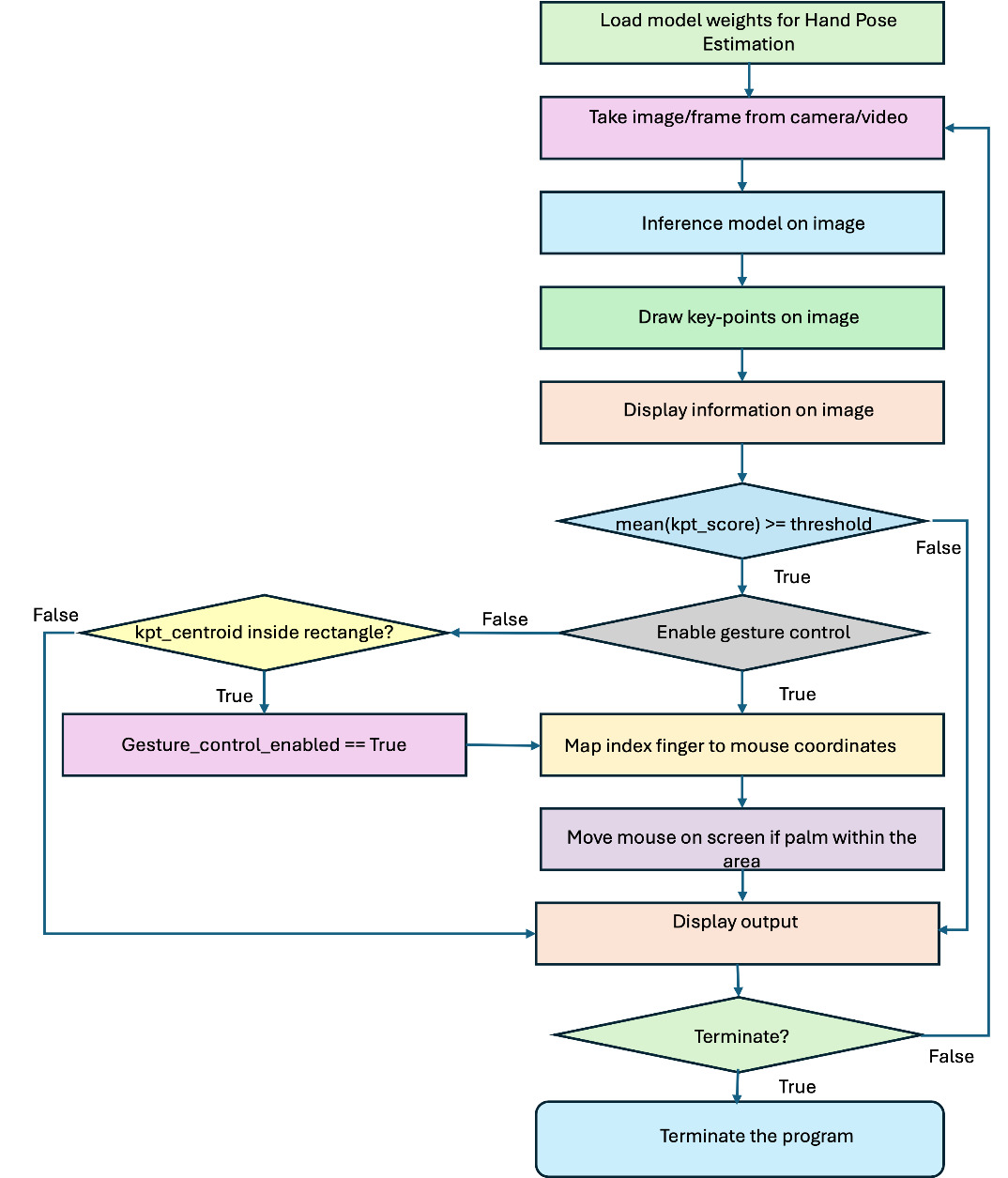

In this study, we used the trained object detection model to identify and track the user’s hand from video frames captured by a standard laptop webcam. Detected hand motion was mapped to on-screen cursor movement, enabling mouse-like interaction without specialized hardware. Mouse and keyboard control were implemented using PyAutoGUI (n.d.-c), a widely used Python automation library.

Once a hand was detected, the palm centroid was computed using the mean keypoint score. Gesture control was activated when the centroid entered a predefined on-screen region, after which the cursor was repositioned to the screen center and controlled through hand movement. If no hand was detected, gesture control was disabled.
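The activation logic described above can be sketched as a small state machine. Several details are assumptions made for the example: the centroid is taken as the mean of the keypoint coordinates, the activation region is a rectangle in camera-frame pixels, and hand displacement is scaled by a fixed `gain`. The class and parameter names are hypothetical. In a live system, the returned position would be passed to `pyautogui.moveTo(x, y)`.

```python
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


class CursorController:
    """Maps hand-centroid motion to cursor positions once control is activated."""

    def __init__(self, screen_size: Tuple[int, int],
                 region: Tuple[float, float, float, float],
                 gain: float = 2.0) -> None:
        self.screen_w, self.screen_h = screen_size
        self.region = region          # (x_min, y_min, x_max, y_max), assumed shape
        self.gain = gain              # cursor pixels per camera pixel (assumed)
        self.active = False
        self.anchor: Optional[Point] = None  # centroid at the moment of activation

    def update(self, keypoints: Sequence[Point]) -> Optional[Tuple[int, int]]:
        if not keypoints:             # no hand detected: disable gesture control
            self.active, self.anchor = False, None
            return None
        cx = sum(x for x, _ in keypoints) / len(keypoints)
        cy = sum(y for _, y in keypoints) / len(keypoints)
        center = (self.screen_w // 2, self.screen_h // 2)
        if not self.active:
            x0, y0, x1, y1 = self.region
            if x0 <= cx <= x1 and y0 <= cy <= y1:
                self.active, self.anchor = True, (cx, cy)
                return center         # cursor is repositioned to the screen center
            return None
        # Active: move the cursor by the scaled displacement from the anchor.
        x = center[0] + self.gain * (cx - self.anchor[0])
        y = center[1] + self.gain * (cy - self.anchor[1])
        x = min(max(int(round(x)), 0), self.screen_w - 1)
        y = min(max(int(round(y)), 0), self.screen_h - 1)
        return (x, y)
```

Webcam frames are often mirrored relative to the user, in which case the horizontal displacement would need its sign flipped before mapping.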

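Keypoints 8 (index fingertip) and 12 (middle fingertip), as defined in Figure 3a, were used to enable click operations. A mouse click was triggered when the Euclidean distance between these two keypoints fell below a threshold:

$$d = \sqrt{(x_{8} - x_{12})^{2} + (y_{8} - y_{12})^{2}} < d_{\text{th}}$$

where $(x_{8}, y_{8})$ and $(x_{12}, y_{12})$ are the image coordinates of keypoints 8 and 12, respectively, and $d_{\text{th}}$ denotes the click threshold.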

The overall workflow of the virtual mouse system is illustrated in Figure 4 with an example of mouse control shown in Figure 5 (a).
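A minimal sketch of the click rule follows. The 30-pixel threshold is an assumed placeholder, and firing only on the frame where the distance first drops below the threshold (rather than on every frame it stays below) is a design choice made for this example to avoid repeated clicks. When `update` returns `True`, a live system would call `pyautogui.click()`.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

INDEX_TIP = 8    # keypoint indices as defined in the text
MIDDLE_TIP = 12


class ClickDetector:
    """Fires once each time the two fingertips come closer than the threshold."""

    def __init__(self, threshold_px: float = 30.0) -> None:  # assumed value
        self.threshold_px = threshold_px
        self._was_below = False

    def update(self, keypoints: Sequence[Point]) -> bool:
        distance = math.dist(keypoints[INDEX_TIP], keypoints[MIDDLE_TIP])
        below = distance < self.threshold_px
        fire = below and not self._was_below  # rising edge only
        self._was_below = below
        return fire
```

Because hand size in the image varies with distance from the camera, a threshold normalized by the bounding-box size may behave more consistently than a fixed pixel value.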

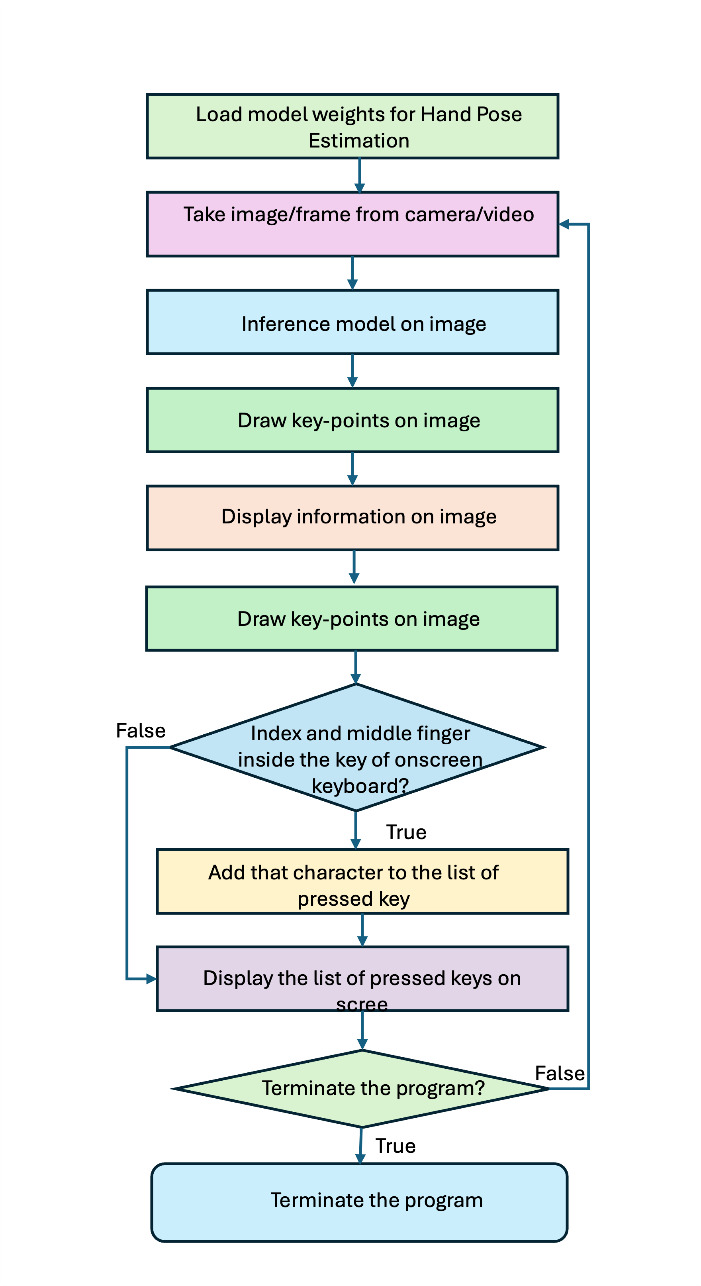

3.2.2. Virtual Keyboard

A virtual keyboard with an intuitive on-screen interface was developed to enable text input through hand gestures. Upon initialization, an empty character list is created to store user input. Keypress detection is based on tracking keypoints 8 and 12, corresponding to the index and middle fingertips, respectively. A snapshot of the virtual keyboard interface is shown in Figure 5 (b). Figure 6 depicts the keypress detection logic.

Keypress detection follows a simple three-step process. First, the size and position of each key are defined based on the keyboard layout. Second, the system checks whether the index and middle fingertips simultaneously fall within the boundary of a specific key. When both fingertips are detected inside a key region, the key is highlighted and the corresponding character is appended to the input text. If the selected key is “BK”, the most recent character is removed from the input list.
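The keypress logic can be sketched as follows. Key sizes, spacing, and the helper names `Key`, `build_row`, and `press` are hypothetical and chosen only to make the example self-contained; the text input is held in a plain list, as described above.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Key:
    label: str
    x: float
    y: float
    w: float
    h: float

    def contains(self, p: Point) -> bool:
        return self.x <= p[0] <= self.x + self.w and self.y <= p[1] <= self.y + self.h


def build_row(labels: Sequence[str], x0: float, y0: float,
              key_w: float = 80, key_h: float = 80, gap: float = 10) -> List[Key]:
    """Step 1: lay out one row of keys from left to right."""
    return [Key(lab, x0 + i * (key_w + gap), y0, key_w, key_h)
            for i, lab in enumerate(labels)]


def press(keys: Sequence[Key], index_tip: Point, middle_tip: Point,
          text: List[str]) -> Optional[str]:
    """Steps 2 and 3: find the key holding both fingertips and update the text."""
    for key in keys:
        if key.contains(index_tip) and key.contains(middle_tip):
            if key.label == "BK":
                if text:
                    text.pop()        # remove the most recent character
            else:
                text.append(key.label)
            return key.label          # caller can highlight this key
    return None
```

In a live loop, the same key would satisfy the test on consecutive frames, so some form of debouncing (for example, requiring the fingertips to leave the key before it can fire again) is likely needed; that detail is not specified here.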

3.3. Benchmarking Against Other Gesture Recognition Models

3.3.1. Comparative analysis of gesture recognition methods

To evaluate the effectiveness of the proposed YOLO-NAS Pose–based gesture recognition system, we compared it with widely used alternatives, including MediaPipe, OpenPose, and a CNN-based method. Each approach was evaluated under identical benchmarking conditions on the same custom dataset and NVIDIA T4 GPU, considering gesture classification accuracy, inference speed, error rate, and suitability for real-time educational applications. A summary of the comparison is presented in Table 3, while additional benchmark results for YOLO variants can be found in (Ali & Zhang, 2024).

Under identical benchmarking conditions, the key observations are as follows. The modified YOLO-NAS Pose model achieved the fastest end-to-end inference time (2.35 ms per frame) while maintaining competitive gesture classification accuracy (approximately 96%) (Nguyen Thi Phuong et al., 2025). Although the CNN-based method achieved the highest accuracy (approximately 99.12%), its substantially higher computational cost resulted in poor real-time performance, limiting its practical applicability (Verma et al., 2024). MediaPipe demonstrated efficient CPU-based execution but exhibited reduced robustness for complex gestures in diverse environments (n.d.-d). OpenPose required high-end GPU resources and showed a higher error rate (approximately 7.6%), which constrained its effectiveness for real-time educational use (Gao et al., 2021).
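Per-frame latency figures of this kind are typically obtained by timing repeated inference calls after a short warm-up. The sketch below shows one way to do so and to convert latency into throughput; `infer` is a hypothetical callable wrapping the model, and the warm-up count is an assumed value. It is not the benchmarking script used in this study.

```python
import statistics
import time
from typing import Callable, Sequence


def mean_latency_ms(infer: Callable[[object], object],
                    frames: Sequence[object], warmup: int = 5) -> float:
    """Average wall-clock time per call to `infer`, in milliseconds."""
    for frame in frames[:warmup]:
        infer(frame)                  # warm-up runs are not timed
    samples = []
    for frame in frames:
        start = time.perf_counter()
        infer(frame)
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.fmean(samples)


def fps_from_latency_ms(latency_ms: float) -> float:
    """Frames per second implied by a per-frame latency."""
    return 1000.0 / latency_ms


if __name__ == "__main__":
    # 2.35 ms per frame corresponds to roughly 425 frames per second.
    print(f"{fps_from_latency_ms(2.35):.1f} FPS")
```

GPU work is usually launched asynchronously, so `infer` should block until the result is available (for example, by synchronizing the device or copying the output to host memory); otherwise the timer measures only the launch overhead.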

3.3.2. Justification for using YOLO-NAS pose

Although CNN-based methods can achieve higher classification accuracy, their high computational cost and latency make them unsuitable for real-time educational applications. YOLO-NAS Pose was selected for this study due to its superior real-time performance, computational efficiency, and adaptability to interactive engineering education tasks.

- Real-time performance and low latency: Interactive learning environments require immediate feedback, particularly in virtual circuit simulations. YOLO-NAS Pose achieves an average inference time of approximately 2.35 ms per frame on an NVIDIA T4 GPU, substantially outperforming CNN-based methods (approximately 22 ms) and OpenPose (approximately 15 ms). In educational contexts, low latency is more critical than marginal accuracy gains, as responsiveness directly impacts user engagement.
- Computational efficiency and scalability: CNN-based approaches typically require high-end GPUs, limiting their feasibility for large-scale classroom deployment. In contrast, YOLO-NAS Pose operates efficiently on moderate hardware such as NVIDIA T4 GPUs, enabling scalable real-time deployment. Compared to OpenPose, YOLO-NAS Pose provides a more effective balance between speed and accuracy with lower hardware requirements.
- Adaptability for educational applications: Unlike general-purpose hand tracking frameworks such as OpenPose and MediaPipe, YOLO-NAS Pose was customized specifically for engineering education. The system supports gesture-based interactions for selecting, manipulating, assembling, and troubleshooting virtual electrical components within the LVSIM-EMS environment. To enhance usability and gesture tracking accuracy, several targeted modifications were introduced, including an adaptive anchor box mechanism, hand-specific feature extraction, and a gesture-specific classification head optimized for pointing, pinching, and swiping gestures.
- Balancing accuracy and usability: As summarized in Table 4, model selection involved a trade-off between absolute accuracy and real-time usability. While CNN-based methods achieved higher accuracy (approximately 99.12%), their high latency, computational demands, and poor scalability limit their suitability for real-time educational use. In contrast, YOLO-NAS Pose achieves competitive accuracy (96.2%) while delivering low latency, moderate hardware cost, and high real-time suitability.

Overall, YOLO-NAS Pose offers the most effective balance of speed, accuracy, and computational efficiency, making it well suited for immersive, gesture-based learning environments in engineering education.

4. Application of Natural HCI in Virtual Electrical Power Lab

4.1. Virtual Electrical Power Lab: LVSIM-EMS

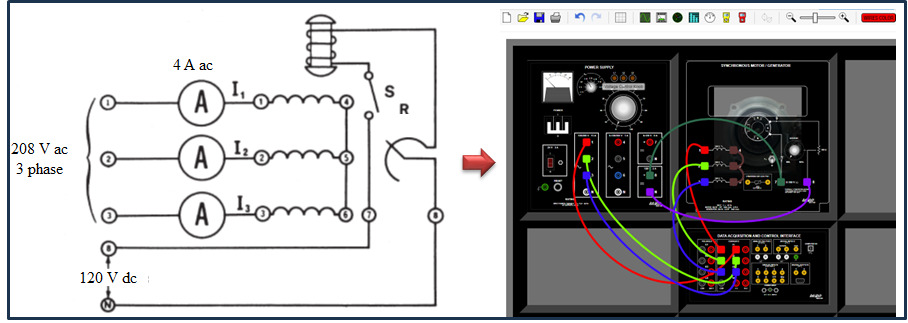

LVSIM-EMS (Electromechanical Systems Simulation Software) integrates multiple FESTO-developed training systems, including electromechanical, AC/DC power circuits, and power transmission modules. Through an intuitive navigation interface, the software provides access to online reference workbooks and replaces traditional EMS laboratory equipment with interactive virtual modules that students can manipulate directly on their computer screens.

LVSIM-EMS employs mathematical models to simulate the electrical and mechanical behavior of real-world EMS components, enabling cost-effective and flexible training in electrical power and machines. The platform supports hands-on exercises in areas such as active and reactive power, phasors, AC/DC motors and generators, three-phase circuits, and transformers. It is available for local installation on Windows PCs, servers, or via online access, making it suitable for both in-person and remote learning environments (n.d.-e).

4.2. Validation and Evaluation by Labs in Mechatronics and Electrical Engineering Courses

4.2.1. Description of courses experiments and educational impact

The introduction of natural human–computer interaction (HCI) through gesture recognition in the LVSIM-EMS virtual electrical power laboratory (n.d.-f) represents a meaningful advancement in student engagement and learning effectiveness. Traditional mouse-and-keyboard interaction in virtual labs often provides limited immersion, as students interact with electrical components through abstract point-and-click actions rather than natural physical movements, reducing kinesthetic learning and practical skill development.

By integrating YOLO-based gesture recognition into the virtual lab environment, students can interact with electrical components using hand gestures that closely resemble real-world manipulation. During laboratory sessions, students performed structured tasks including wiring single-phase and three-phase circuits, rotating and repositioning virtual motors and transformers, conducting voltage and current measurements, and identifying and correcting wiring faults. These tasks were explicitly mapped to supported gestures—pointing for selection, pinching for rotation and adjustment, and swiping for resetting circuit states—ensuring alignment between interaction design and learning objectives.

This natural HCI approach enhances experiential learning by enabling intuitive circuit manipulation and troubleshooting through hand movements. Compared to conventional interaction methods, gesture-based control improves spatial reasoning, procedural understanding, and problem-solving skills that are directly transferable to real-world engineering practice.

To evaluate learning impact, student performance was assessed across key metrics, including knowledge integration, problem identification and troubleshooting, and cognitive load and usability perception measured through post-experiment surveys. Comparative analysis showed that students using gesture-based interaction demonstrated higher engagement and improved problem-solving efficiency relative to those using traditional mouse-and-keyboard controls, supporting the effectiveness of natural HCI in virtual electrical engineering education.

4.2.2. Addressing the Limitations of Commercially Available Virtual Interfaces

Although commercially available VR laboratory systems with hand-tracking controllers (e.g., Meta Quest Pro and Apple Vision Pro) offer immersive learning experiences, their adoption in educational settings is limited by several factors. High equipment costs make large-scale classroom deployment impractical, while reliance on proprietary hardware reduces accessibility and compatibility with standard computing environments. In addition, many commercial VR applications emphasize generic interaction rather than providing customizable, discipline-specific simulations aligned with engineering curricula.

The proposed approach addresses these limitations through a low-cost, webcam-based gesture recognition system that enables natural human–computer interaction without requiring specialized VR hardware. The YOLO-based framework is scalable and can be deployed in standard computer laboratories with minimal additional equipment, providing an accessible alternative to high-end VR systems.

Furthermore, the system is designed to align directly with engineering course requirements, allowing customization for different laboratory experiments. This flexibility ensures that gesture-based interaction enhances immersion while supporting contextually relevant, hands-on engineering education.

4.2.3. Experimental Validation and Statistical Analysis of Learning Outcomes

In the original LVSIM-EMS laboratory environment, students primarily interacted with simulated equipment using a mouse and keyboard. While functional, this interaction mode provided limited immersion and did not fully replicate real-world electrical wiring and circuit manipulation. The introduction of a natural HCI approach significantly enhanced the learning experience by enabling students to interact with virtual electrical components using intuitive hand gestures that more closely resemble physical operations. Table 5 summarizes key differences among traditional mouse–keyboard interaction, commercial VR-based interfaces, and the proposed YOLO-based gesture recognition system.

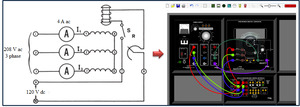

For example, in the three-phase synchronous motor application lab (Figure 7), students were able to replicate real-world wiring procedures virtually, strengthening the connection between theoretical concepts and hands-on practice. This gesture-based interaction supports experiential learning by immersing students in an environment that mirrors professional engineering workflows, while also providing a safer and more controlled setting that minimizes the risk of equipment damage due to wiring errors or short circuits.

To evaluate the effectiveness of the proposed approach, a comparative study was conducted in the LVSIM-EMS virtual electrical power laboratory with students enrolled in ENGR 4520: Electrical Power and Machinery (Mechatronics program) and ET 4640: Industrial Electricity (Electrical Engineering Technology program) at Middle Tennessee State University (MTSU). A total of 106 students participated, with 53 students randomly assigned to a control group using traditional mouse–keyboard interaction and 53 students assigned to an experimental group using the YOLO-based gesture recognition system.

Learning outcomes were evaluated using multiple metrics, including task completion time, correctness of circuit connections, troubleshooting success rate, and quiz performance. These measures were normalized and aggregated into composite performance indicators for statistical comparison between interaction conditions.

Although the sample size was moderate, it is consistent with prior engineering education studies, where participant numbers are often constrained by course enrollment and laboratory availability. Previous work has demonstrated that meaningful and statistically valid educational insights can be obtained from similar sample sizes, including studies by Ene and Ackerson (2018), Gómez et al. (2013) and Smith et al. (2021), reinforcing the validity of the experimental design used in this study.

4.2.3.1. Statistical analysis of learning outcomes

To assess the robustness of the results, quantitative statistical analyses were conducted to compare traditional mouse–keyboard interaction with the YOLO-based gesture recognition system across key learning outcomes. Student engagement was evaluated using pre- and post-lab surveys assessing usability, interaction efficiency, and perceived immersion. Problem-solving efficiency was measured through lab performance metrics, including circuit assembly accuracy, troubleshooting success rate, and task completion time. Knowledge retention was assessed using pre-lab quizzes, post-lab quizzes, and final exam performance.

4.2.3.2. Statistical analysis results

Paired t-tests were used to compare pre- and post-test performance within each group, while independent t-tests evaluated differences between interaction conditions. Practical significance was assessed using Cohen’s d. The results, summarized in Table 6, show that students using the YOLO-based gesture recognition system achieved significantly higher engagement, problem-solving efficiency, and knowledge retention, with moderate to high effect sizes.
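For readers who wish to reproduce this style of analysis, the three statistics can be sketched with the standard library alone. The sketch assumes the pooled-variance (Student) form of the independent t-test and the pooled-standard-deviation form of Cohen's d; which variants were used in this study is not stated. The function names are hypothetical, and the sketch returns only the test statistic and degrees of freedom. P-values would come from the t distribution, for example via `scipy.stats.ttest_ind` and `scipy.stats.ttest_rel`.

```python
import math
import statistics
from typing import Sequence, Tuple


def _pooled_sd(a: Sequence[float], b: Sequence[float]) -> float:
    """Pooled sample standard deviation of two independent groups."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    return math.sqrt(pooled_var)


def cohens_d(a: Sequence[float], b: Sequence[float]) -> float:
    """Standardized mean difference between groups a and b."""
    return (statistics.fmean(a) - statistics.fmean(b)) / _pooled_sd(a, b)


def independent_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, int]:
    """Student's t statistic and degrees of freedom for two independent groups."""
    na, nb = len(a), len(b)
    standard_error = _pooled_sd(a, b) * math.sqrt(1 / na + 1 / nb)
    return (statistics.fmean(a) - statistics.fmean(b)) / standard_error, na + nb - 2


def paired_t(pre: Sequence[float], post: Sequence[float]) -> Tuple[float, int]:
    """Paired t statistic and degrees of freedom for pre/post scores."""
    diffs = [q - p for p, q in zip(pre, post)]
    n = len(diffs)
    standard_error = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.fmean(diffs) / standard_error, n - 1
```

With 53 students per group, the independent test has 104 degrees of freedom and each within-group paired test has 52.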

4.2.3.3. Conclusion on experiment relevance

Despite the moderate sample size, rigorous statistical analysis, consistency with prior literature, and strong effect sizes indicate that the findings are robust and meaningful. The results provide clear evidence that natural gesture-based HCI improves learning outcomes in virtual electrical laboratories. While larger-scale studies will further strengthen these conclusions, this work demonstrates the educational potential of YOLO-based gesture recognition in engineering technology education.

4.3. User Experience and Educational Outcomes

4.3.1. User experience metrics

Cognitive load, ease of use, and interaction speed were evaluated using pre- and post-experiment surveys based on NASA-TLX and System Usability Scale (SUS)–style items (5-point Likert), as summarized in Table 7. Results indicate that gesture-based interaction was perceived as more intuitive and easier to use (SUS score: 4.6) than traditional mouse–keyboard input. Although cognitive load was moderate due to system familiarization, it did not adversely affect performance. Interaction speed improved significantly, reducing task completion time by 21.5%.

4.3.2. Educational outcomes and student performance

Learning effectiveness was evaluated using pre- and post-lab quizzes, problem-solving accuracy, and troubleshooting performance (see Table 8). Students using gesture-based interaction demonstrated significantly improved learning outcomes. Post-lab quiz scores increased substantially (p < 0.01, Cohen’s d = 1.45), indicating stronger knowledge retention. Problem-solving accuracy also improved from 72.5% to 85.3% (p < 0.01), reflecting enhanced engagement and task performance.

5. Conclusions and Future Work

This study presents a gesture-based human–computer interaction (HCI) system for virtual engineering education that enables intuitive, real-time interaction through a modified YOLO-NAS Pose model. The system supports natural hand-based control in a screen-based virtual electrical power laboratory without requiring traditional input devices. Benchmark comparisons with MediaPipe, OpenPose, and CNN-based models show that YOLO-NAS Pose achieves an effective balance of accuracy (96.2%), low latency (2.35 ms per frame on NVIDIA T4), and computational efficiency, making it well suited for real-time educational use.

Experimental results demonstrate that gesture-based interaction significantly enhances student engagement and learning outcomes. Compared to traditional input methods, students completed tasks 21.5% faster, achieved higher problem-solving accuracy (85.3% vs. 72.5%), and showed significant learning gains (p < 0.01, Cohen’s d = 1.45). The system’s ability to operate efficiently on moderate hardware further supports its scalability and cost-effectiveness as an alternative to VR-based solutions.

Future work will focus on improving gesture recognition in multi-user environments, enhancing robustness under varying conditions, and incorporating adaptive AI techniques for personalized learning. Extending the framework to additional STEM disciplines will further evaluate the role of AI-driven HCI in improving accessibility and learning outcomes across virtual education environments.
