1. INTRODUCTION

Introductory computer science service courses are often required for non-computer science majors and are widely recognized as intimidating. Students with little or no prior programming experience may feel disadvantaged compared to peers who already have coding backgrounds, a dynamic that can be especially discouraging for underrepresented groups in engineering (Sloan & Troy, 2008). These challenges contribute to lower satisfaction, reduced retention, and higher rates of course withdrawal.

Traditional grading practices, which rely on weighted averages across assignments and exams, often fail to reflect true mastery of learning objectives (Hochbein & Pollio, 2016; Ritter et al., 2016). A student may earn a high course grade while still lacking competence in critical skills, or conversely, see overall performance penalized by a single poor result. Such structures can obscure both strengths and weaknesses, limiting opportunities for targeted improvement.

To address these issues, this study explores a combined framework of competency-based grading and assignment choice. Competency-based grading emphasizes mastery of defined learning objectives, while assignment choice allows students to pursue pathways that align with their interests and strengths. Grounded in Self-Determination Theory (Ryan & Deci, 2017), this framework is designed to promote autonomy, competence, and belonging—factors that prior research links to improved motivation and persistence.

Using a mixed-methods design, we compare two parallel sections of the same CS1 course: one taught traditionally and one using assignment choice with competency-based grading. Quantitative outcomes include grade distributions and D, F, and Q-drop (withdrawal) rates, referred to as DFQ rates, while qualitative data from student feedback provide context for satisfaction and engagement.

This study contributes new evidence to the literature by examining the combined effect of assignment choice and competency-based grading in a CS1 service course for non-majors. Our findings highlight both the potential and the practical considerations of implementing this framework in service-level computing education.

2. LITERATURE REVIEW

This study builds on a systematic review conducted using the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines. The process began with 304 studies, which were reduced to 19 after screening for duplicates, relevance, and methodological rigor. These studies provide evidence on competency-based grading, student retention in STEM, and assignment choice. However, no prior work directly examines the combined impact of competency-based grading and assignment choice in a computer science (CS) service course for non-majors.

2.1. Competency-Based Education in STEM

Competency-based approaches emphasize mastery of well-defined learning objectives rather than accumulation of points across categories. Studies show that competency-based education can increase retention of learning outcomes and provide students with clearer pathways to success (Durand, Belacel, & Goutte, 2016; Hochbein & Pollio, 2016). Research in computing and engineering has extended this approach to define frameworks for learning outcomes and professional skills (Frezza et al., 2018; Raj et al., 2021). By ensuring students demonstrate competence in specific areas before progressing, these models reduce variability in preparation and support long-term persistence.

Recent work also highlights the importance of aligning competency-based assessment tools across courses. For example, Vargas, Muñoz, and Arancibia (2025) describe the C-A&M model, which provides standardized assessment practices to improve consistency and scalability in competency-based frameworks.

However, the additional structure required can present challenges. Instructors must design explicit competency frameworks, often increasing upfront workload (Graves, 1996). Despite this, successful implementations suggest that the payoff includes higher student achievement and clearer signals of readiness for future coursework (Loveless, 2017; Mah & Ifenthaler, 2018).

2.2. Retention, Belonging, and Equity in Introductory STEM Courses

A recurring challenge in CS1 and related service courses is the retention of non-majors, particularly from underrepresented groups. Studies in biology and engineering education demonstrate that course design strongly influences students’ sense of belonging and persistence (Eddy et al., 2014; Wilton et al., 2019). Structured interventions such as supplemental instruction (Rath et al., 2007) and outcome-based assessment (Kuruganti, Needham, & Zundel, 2012) have been shown to improve success rates for students with less prior experience.

Increased course structure has been particularly effective in reducing performance gaps (Auerbach & Schussler, 2017; Eddy & Hogan, 2014). Students from diverse backgrounds report greater confidence and engagement when courses emphasize inclusivity and scaffolded learning opportunities (Knekta et al., 2020). These findings are especially relevant in CS service courses where many students approach programming with limited prior exposure and heightened anxiety (Dawson et al., 2018).

2.3. Assignment Choice and Personalization

Beyond structure, research indicates that giving students autonomy through assignment choice can positively impact engagement, ownership, and motivation (Dabrowski & Marshall, 2018; Halstead-Nussloch et al., 2014; Hobbs et al., 2021). Choice allows students to align coursework with personal interests, reinforcing relevance and encouraging persistence. At the same time, studies caution that too much choice can overwhelm learners or lead to avoidance of challenging tasks (Iyengar & Lepper, 2000). Successful implementations balance autonomy with minimum standards, ensuring both flexibility and rigor.

2.4. Synthesis and Research Gap

The existing literature offers strong evidence that competency-based grading can improve mastery and retention, while assignment choice can increase motivation and engagement. However, these approaches have largely been studied separately. No research to date has examined the combined implementation of competency-based grading and assignment choice in a CS1 service course for non-majors. This represents a significant gap given the increasing demand for computational skills across STEM disciplines (Adams & Pruim, 2012; Sedgewick, 2019).

This study addresses that gap by evaluating the impact of combining competency-based grading with assignment choice, framed through Self-Determination Theory. Specifically, it investigates effects on student satisfaction, retention, and successful completion in an introductory computer science service course.

3. METHODS AND CONTEXT

Building an assignment choice course framework offers a distinct advantage to instructors who use this method (Bota et al., 2000). The framework provides clear guidance, which makes course design more efficient and repeatable and simplifies later refinement of the course structure. The learning objectives are divided into two groups: core requirements, and requirements that distinguish average, good, and excellent students. This division helps ensure that assignments align with the course learning objectives. Because the framework is modular, individual portions of the course outline can be updated or optimized to better achieve the learning objectives without redesigning the whole course.

For students, the framework gives structure to the course, clarifying what students need to accomplish and what the expectations are, and motivating them to complete the course successfully. Finally, the framework allows iterative improvement through student feedback and course evaluations.

Using a theoretical framework to help instructors design a systematic course can help students progress from theoretical to more practical use of the learning objectives (Dallimore & Souza, 2002). Students come to understand the importance of defining goals and selecting assignments suited to their interests. We argue that understanding and ownership of an assignment is an essential but often overlooked prerequisite for student success.

Providing instructors with framework components gives structure to course design and can simplify implementing assignment choice. Examples of the components needed to build a course framework include student needs, course goals and objectives, course content, materials and activities, organization of the content and activities, and evaluation (Graves, 1996). Using a framework enhances the instructor's ability to address all learning objectives.

Self-Determination Theory (SDT), which emphasizes intrinsic motivation, autonomy, competence, and relatedness (Ryan & Deci, 2017), is the basis for this research. We use competency-based grading to let students demonstrate mastery of the subject, and we add assignment choice to build student motivation. Students choose assignments that align with their interests, strengths, and learning preferences, and this autonomy promotes a greater sense of ownership of their work. When students choose tasks they feel capable of completing, their confidence and belief in their abilities grow. We encourage students to select assignments that connect to their personal experiences or interests; when students feel connected to the assignment content, they are more likely to engage with the material and feel a sense of accomplishment (Dabrowski & Marshall, 2018).

3.1. Research Questions

This study explores how using competency-based grading and adding assignment choice to the course structure relate to competent course completion, success, and satisfaction in a first-year Computer Science service course. We address the following research questions:

- To what extent does adding assignment choice to a CS1 course improve student satisfaction?
- To what extent does adding assignment choice to a CS1 course improve competent course completion?
- Does adding assignment choice help students better learn the course learning objectives?

4. RESEARCH MODEL

As an instructor for a CS service course, I have a classroom and students that provide a natural population for this research. Between 300 and 400 students take this course each academic year. I am also a Faculty Fellow in our university’s Center for Teaching Excellence (CTE) Innovation and Design for Exploration and Analysis in Teaching Excellence (IDEATE) community, a group of faculty who conduct Scholarship of Teaching and Learning (SoTL) research. This opportunity allowed me to work with the IDEATE faculty to design and validate research surveys for use in our classrooms.

Self-Determination Theory (SDT) is a broad framework centered on the connections between the intrinsic motives and needs inherent in human nature and the extrinsic forces acting on people (Ryan & Deci, 2017). SDT takes an organismic dialectical approach (Center for Self-Determination Theory, 2022): people are active organisms with a wide and diverse set of experiences. This view lets SDT consider both the active organism and the social context of its development to make predictions about behavior, experience, and development. SDT comprises six mini-theories (Center for Self-Determination Theory, 2022); see Appendix A. We draw on these mini-theories to varying degrees in this research.

4.1. Competency-Based Grading

Data collection to implement and improve our competency-based grading occurred from Spring 2022 through Fall 2023. All competency-based grading data was gathered from participants who consented and were part of the CS service courses. All data was collected per the Institutional Review Board (IRB) guidelines.

Qualitative and quantitative data were collected and coded in a mixed-methods study. The data are collected through a non-graded in-class survey; responses are sanitized, and responses from non-consenting students are removed. See Appendix B for the survey questions. These questions were also used in a class at our university's Pharmacy school. With the help of the CTE, we were able to code the results and use them to improve our delivery of the competency-based grading model. The survey mixes open-ended and Likert-scale questions. We used MAXQDA software to code and analyze the results. With IRB approval, these data were used to implement and improve courses that allow students to show mastery, choose assignments, and keep working until they demonstrate competency in the learning objectives.

4.2. Assignment Choice

Students in an introductory Computer Science course who have not chosen Computer Science as their major are the primary participants in this research. Many students come to a Computer Science course with heightened anxiety when Computer Science is not their major (Dawson et al., 2018). Students also recognize that they need some education in this field because demand is increasing for professionals outside Computer Science who have computational and technical expertise (Adams & Pruim, 2012). We also wanted to address an imbalance in the grading framework: some students quickly earned every possible point, including bonus and extra credit, while others struggled to learn the minimum.

To address this issue, the idea of assignment choice was introduced. Before introducing this concept, I examined the potential benefits and pitfalls of this pedagogy. While studies have shown that assignment choice can improve a student’s intrinsic motivation (Iyengar & Lepper, 2000), too much choice can demotivate students (Brooks & Young, 2011). Another drawback is that students may pick the easy assignments over the ones that help them achieve the learning objectives of the class; this can be overcome with minimum standards for grading (Lang, 2014). Finally, increasing the workload of teaching faculty, including myself, was not desirable.

5. THE STUDY

We examined the needs of non-computer science students in CS courses, focusing on methods that could improve engagement, satisfaction, and successful completion. To address these needs, we introduced assignment choice within a competency-based grading scheme. Students were required to complete a set of core assignments to demonstrate baseline competence, while additional choice assignments allowed them to extend learning and pursue higher grades. This framework reinforced autonomy while ensuring mastery of essential course objectives.

A key principle of the grading scheme was that poor performance on a single assignment did not lower a student’s overall grade; it simply did not contribute additional points. This approach encouraged persistence and allowed students to improve over time.
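To make this principle concrete, the following minimal sketch contrasts a percentage-based grade with an additive, threshold-gated point total. It assumes, as our reading of the text rather than a stated rule, that the 70% threshold is applied per assignment, so an assignment below 70% of its maximum adds nothing instead of pulling an average down. The function names and scores are hypothetical.

```python
def weighted_percentage(scores, maxima):
    """Traditional view: percentage of all possible points on graded work."""
    return 100 * sum(scores) / sum(maxima)


def gated_total(scores, maxima, gate_percent=70):
    """Additive view (assumed per-assignment gate): an assignment adds its
    points only if it reaches gate_percent of its maximum.

    Integer arithmetic (100 * score >= gate_percent * max) avoids float rounding.
    """
    return sum(s for s, m in zip(scores, maxima) if 100 * s >= gate_percent * m)


# Hypothetical record: two strong results, then one poor result is added.
before_scores, before_max = [95, 90], [100, 100]
after_scores, after_max = [95, 90, 20], [100, 100, 100]

print(weighted_percentage(before_scores, before_max))          # 92.5
print(round(weighted_percentage(after_scores, after_max), 1))  # 68.3
print(gated_total(before_scores, before_max))                  # 185
print(gated_total(after_scores, after_max))                    # 185
```

Under the percentage view the poor result drops the grade by more than 24 points; under the additive view the total is unchanged, and the student can recover the missing points through other assignments.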

5.1. The Course Map

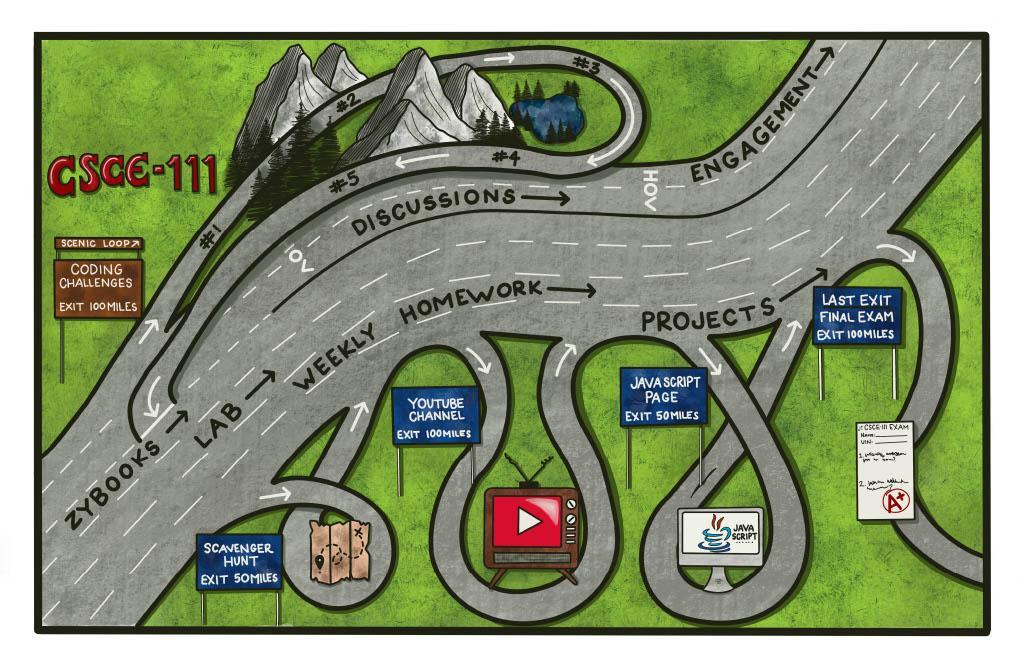

To make the framework transparent, we provided a visual Course Map (Figure 1). The “highway” lanes represented the required core assignments, completion of which earned a grade of C. Additional “side lanes” represented optional assignments that could elevate a student’s grade to a B or A. This design communicated that success required first demonstrating competence in the core areas, but students could individualize their path beyond that baseline.

5.2. The Course Calculator

Students also used a web-based course calculator to plan and track their chosen path. The calculator displayed all assignment categories, current points earned, and the grade outcome for different combinations of work. To reinforce transparency, students submitted a screenshot of their initial plan during the first week, and updated it at midterm to reflect their progress. This tool promoted goal setting and self-monitoring throughout the semester.
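The paper does not describe how the web-based calculator works internally. The following is a minimal sketch assuming only the rules stated in Sections 5.4 and 6.1.2: each of the four core categories must reach 70% of its maximum (100, 500, 100, and 200 points), and 1050 of the 1500 points earn an A. The category keys and the `plan_status` function are hypothetical. The sketch does not compute a B cutoff, which is shown only in Figure 4, and it does not model the per-category counting rule described in Section 6.1.2.

```python
# Core categories and maximum points as reported in Section 6.1.2.
# The dictionary keys are hypothetical names, not the real tool's fields.
CORE_MAX = {
    "reading_quizzes": 100,
    "coding_assignments": 500,
    "labs": 100,
    "group_projects": 200,
}
CORE_GATE_PERCENT = 70   # each core category must reach 70% (Section 5.4)
A_THRESHOLD = 1050       # points needed for an A out of 1500 (Section 5.4)


def plan_status(earned):
    """Summarize a planned or in-progress path through the course.

    `earned` maps category names (core and choice) to points earned or planned.
    Returns (core_gate_met, total_points, points_still_needed_for_an_A).
    If core_gate_met is False, the plan falls below a C.
    """
    core_gate_met = all(
        100 * earned.get(cat, 0) >= CORE_GATE_PERCENT * max_pts
        for cat, max_pts in CORE_MAX.items()
    )
    total = sum(earned.values())
    return core_gate_met, total, max(0, A_THRESHOLD - total)


plan = {
    "reading_quizzes": 90, "coding_assignments": 450, "labs": 85,
    "group_projects": 180, "discussions": 90, "coding_puzzles": 95,
}
print(plan_status(plan))   # (True, 990, 60)
```

A student revisiting this plan at midterm would see that 60 more points, for example from one additional choice category, would reach the A threshold.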

5.3. Methods and Validation

To measure the impact of this framework, we created a survey instrument drawing on prior work in engineering education (DeMonbrun et al., 2017). The instrument combined Likert-scale items and open-ended questions, validated using exploratory and confirmatory factor analysis. See Section 5.3.1 for details of the validation process. IRB approval was obtained for all data collection.

5.3.1. Exploratory and Confirmatory Factor Analysis

Factor analysis is frequently used in psychological and educational research to validate instruments (Hogarty et al., 2005). The benefits of factor analysis include, but are not limited to, reducing the number of variables, examining the factor structure, gathering construct validity evidence, and developing theoretical constructs (Thompson, 2004). Exploratory Factor Analysis (EFA) is exploratory in nature and makes no prior assumptions about the number of latent factors, whereas Confirmatory Factor Analysis (CFA) specifies the number of latent factors in advance based on prior theory (Williams et al., 2010). We used the JASP software package to perform both EFA and CFA.

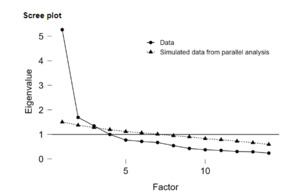

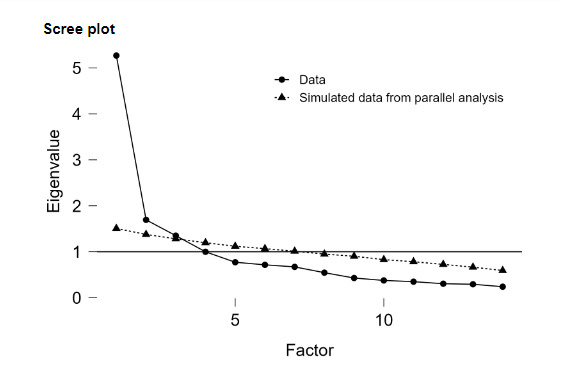

The EFA procedure requires the researcher first to determine whether EFA is the most appropriate tool for the research goal (Fabrigar et al., 1999). Because our goals include identifying the underlying factors represented by the items in the survey instrument, EFA was appropriate. The number and soundness of the measured variables included in the study also play an important role (Cattell, 1978). Each factor should be represented by a minimum of three to five measured variables (Fabrigar et al., 1999), and our study satisfies this requirement. Descriptive statistics, including mean, standard deviation, skewness, and kurtosis, were calculated to assess the normality of the data. The Kaiser-Meyer-Olkin (KMO) measure and Bartlett's test of sphericity were used to check the suitability of the data for EFA (Field, 2009). A KMO value greater than 0.8 indicates adequate sampling, and a significance value less than 0.05 for Bartlett's test of sphericity indicates that the correlation matrix is not an identity matrix (Hair et al., 2019). To determine the number of factors, we used the eigenvalue criterion in JASP, retaining only factors with an eigenvalue greater than one, as shown in the scree plot (Figure 2). EFA also requires the researchers to choose an appropriate extraction method and rotation. Maximum likelihood extraction is accurate for distributions that are close to normal, with absolute skewness below 2 and absolute kurtosis below 7. We used Promax, an oblique rotation that allows the factors to correlate (Hendrickson & White, 1964).
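The three suitability checks described above can be sketched directly from the item correlation matrix. This is an illustrative NumPy implementation of the standard formulas, not the JASP code the authors used, and the `efa_suitability` name is ours. Bartlett's statistic is $-(n - 1 - (2p+5)/6)\ln|R|$ with $p(p-1)/2$ degrees of freedom, the overall KMO compares squared correlations with squared partial correlations, and the Kaiser criterion counts eigenvalues above one.

```python
import numpy as np


def efa_suitability(X):
    """Screen an (n_respondents x p_items) response matrix before EFA.

    Returns the overall KMO, Bartlett's chi-square statistic and degrees of
    freedom, the eigenvalues of the correlation matrix (descending), and the
    number of factors retained by the Kaiser (eigenvalue > 1) criterion.
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    R = np.corrcoef(X, rowvar=False)          # p x p item correlation matrix

    # Bartlett's test of sphericity (H0: R is an identity matrix).
    _, logdet = np.linalg.slogdet(R)
    chi2 = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2

    # Overall KMO: squared correlations versus squared partial correlations,
    # where partial correlations come from the inverse correlation matrix.
    R_inv = np.linalg.inv(R)
    scale = np.sqrt(np.outer(np.diag(R_inv), np.diag(R_inv)))
    partial = -R_inv / scale
    off_diag = ~np.eye(p, dtype=bool)
    r2 = np.sum(R[off_diag] ** 2)
    a2 = np.sum(partial[off_diag] ** 2)
    kmo = r2 / (r2 + a2)

    # Kaiser criterion: retain factors whose eigenvalue exceeds 1.
    eigenvalues = np.linalg.eigvalsh(R)[::-1]  # eigvalsh returns ascending order
    n_factors = int(np.sum(eigenvalues > 1))
    return kmo, chi2, df, eigenvalues, n_factors
```

For a 14-item instrument, the degrees of freedom are 14 × 13 / 2 = 91, matching the value reported in Section 5.3.2. A p-value can then be taken from the chi-square distribution with those degrees of freedom (for example, with `scipy.stats.chi2.sf`).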

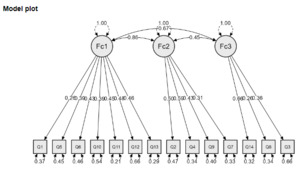

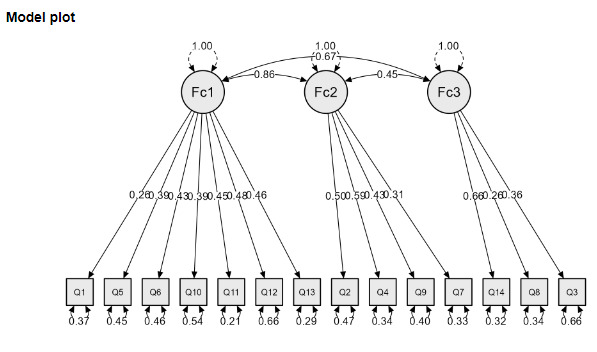

CFA helps us examine structural or factorial validity and is essential in developing psychometrically sound measures. It is often used after EFA to confirm whether the factor structure obtained from EFA holds in a new sample (Harrington, 2009). In our study, we used the pre-survey data collected at the beginning of the semester to perform EFA and the post-survey data collected at the end of the semester to perform CFA. Our survey instrument has 14 items, and we use CFA to confirm whether these items relate to their respective latent (unobserved) variables as hypothesized. Per our hypothesis, Q1, Q5, Q6, Q10, Q11, Q12, and Q13 represent the latent construct “My understanding of coding and its use” (Factor 1); Q2, Q4, Q7, and Q9 represent the latent construct “How confident I am that I can do this” (Factor 2); and Q3, Q8, and Q14 represent the latent construct “Value of coding to me/society” (Factor 3). This model was specified in JASP.
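The hypothesized three-factor model can also be written in lavaan's model syntax, where `=~` reads "is measured by". The sketch below is our illustration, since the authors specified the model through JASP. It builds that specification from the item mapping above and checks that each of the 14 items is assigned to exactly one factor. The labels F1 to F3 and the helper names are shorthand we introduce.

```python
# Hypothesized item-to-factor mapping from Section 5.3.1.
MODEL = {
    "F1": ["Q1", "Q5", "Q6", "Q10", "Q11", "Q12", "Q13"],  # understanding of coding and its use
    "F2": ["Q2", "Q4", "Q7", "Q9"],                         # confidence
    "F3": ["Q3", "Q8", "Q14"],                              # value of coding to me/society
}


def to_lavaan(model):
    """Render a CFA measurement model as lavaan syntax, one '=~' line per factor."""
    return "\n".join(f"{factor} =~ {' + '.join(items)}" for factor, items in model.items())


def check_partition(model, n_items=14):
    """True if Q1..Qn_items are each assigned to exactly one factor."""
    assigned = [item for items in model.values() for item in items]
    expected = {f"Q{i}" for i in range(1, n_items + 1)}
    return len(assigned) == len(set(assigned)) and set(assigned) == expected


print(to_lavaan(MODEL))
print(check_partition(MODEL))   # True
```

The resulting string could be passed to lavaan's `cfa()` function in R; checking the partition first catches an item that is accidentally duplicated or omitted.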

5.3.2. EFA and CFA Results

To understand the underlying factor structure of the survey instrument, we performed EFA on the pre-survey data collected at the beginning of the semester from both sections of the course (traditional and assignment choice). A total of 185 students responded to the survey. See Table 1 for the descriptive statistics: mean (M), standard deviation (SD), skewness, and kurtosis. The skewness of the measured variables ranges from -1.492 to 0.461, indicating moderate asymmetry. The highest kurtosis value is 3.140, indicating a peaked distribution, while the lowest is -0.596, indicating a flatter distribution. Both the skewness and kurtosis values fall within the limits for which Maximum Likelihood extraction can be used (Curran et al., 1996).
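The normality screen behind this decision can be sketched in a few lines. This example computes moment-based skewness and excess kurtosis and applies the absolute-value limits of 2 and 7 described in Section 5.3.1. Statistics packages often apply small-sample corrections, so their values may differ slightly from these. The function names are ours.

```python
from statistics import fmean


def moment_skew_kurtosis(values):
    """Population (moment-based) skewness and excess kurtosis."""
    m = fmean(values)
    m2 = fmean([(x - m) ** 2 for x in values])
    m3 = fmean([(x - m) ** 3 for x in values])
    m4 = fmean([(x - m) ** 4 for x in values])
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3


def ml_extraction_ok(skew, kurt, skew_max=2.0, kurt_max=7.0):
    """True if both statistics are within the limits for ML extraction."""
    return abs(skew) < skew_max and abs(kurt) < kurt_max


# The reported extremes from Table 1 all pass the screen.
print(ml_extraction_ok(-1.492, 3.140), ml_extraction_ok(0.461, -0.596))  # True True
```

In practice, `moment_skew_kurtosis` would be applied to each item's column of Likert responses, and `ml_extraction_ok` would be checked for every item.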

An overall KMO value of 0.86 indicates that the dataset used in this study is appropriate for EFA and for identifying reliable factors. Bartlett's test (chi-squared = 1043.588, df = 91, p < 0.001) indicates that the variables are related and the dataset is suitable for factor analysis. The scree plot in Figure 2 suggested retaining three factors with eigenvalues greater than one; Table 2 shows the results of the three-factor analysis. The measurement model plot in Figure 3 shows the mapping of the questions to the factors.

We collected 169 student responses for the CFA. The measurement model plot is shown in Figure 3. Loadings range from 0.261 to 0.481 for Factor 1, 0.310 to 0.588 for Factor 2, and 0.259 to 0.660 for Factor 3. Factor loadings close to 1 indicate a strong relationship between an item and its latent construct, while values close to zero indicate a weak relationship. Our model produced the fit indices CFI = 0.83, TLI = 0.80, SRMR = 0.069, and RMSEA = 0.08. CFI and TLI values close to 0.90 indicate a good fit, and our values fall a little below that range (Kim et al., 2016). RMSEA values between 0.08 and 0.10 fall within the marginal fit range, while values greater than 0.10 indicate poor fit; our RMSEA value therefore indicates marginal fit. SRMR values less than 0.08 indicate a good fit, so our value of 0.069 indicates a good fit. Overall, our fit indices indicate a reasonable model (Hu & Bentler, 1999). The CFA was estimated with the lavaan package using the Maximum Likelihood estimator.
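The following sketch shows how the fit indices can be labeled using the cutoff values discussed in this section. CFI and TLI at or above 0.90 are treated as good, RMSEA from 0.08 to 0.10 as marginal and above 0.10 as poor, and SRMR below 0.08 as good. The text does not name a range for RMSEA below 0.08, so the "acceptable" label used here is our assumption. The function name is ours.

```python
def classify_fit(cfi, tli, rmsea, srmr):
    """Label CFA fit indices against the cutoffs discussed in Section 5.3.2."""
    def incremental(value):
        return "good" if value >= 0.90 else "below good"

    if rmsea < 0.08:
        rmsea_label = "acceptable"   # assumed label; the text defines only marginal/poor
    elif rmsea <= 0.10:
        rmsea_label = "marginal"
    else:
        rmsea_label = "poor"

    return {
        "CFI": incremental(cfi),
        "TLI": incremental(tli),
        "RMSEA": rmsea_label,
        "SRMR": "good" if srmr < 0.08 else "above cutoff",
    }


print(classify_fit(cfi=0.83, tli=0.80, rmsea=0.08, srmr=0.069))
```

Applied to the reported values, the function labels CFI and TLI "below good", RMSEA "marginal", and SRMR "good", which matches the interpretation above.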

All data collection followed Institutional Review Board (IRB) protocols. The Human Research Protection Program determined both the competency-based grading and satisfaction/retention studies met the criteria for Exemption (IRB2020-0629; IRB2023-0053M).

5.4. The Course Setup

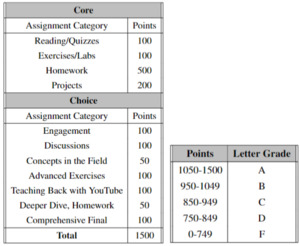

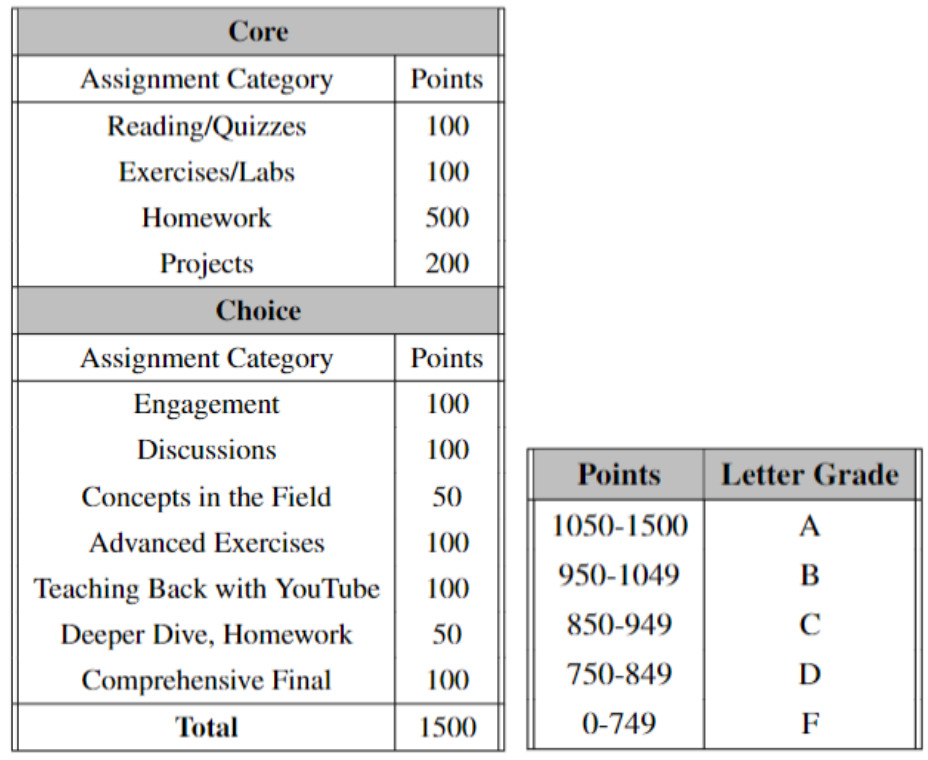

The course framework combined competency-based grading with assignment choice. Four required core categories—labs, homework, projects, and textbook quizzes—had to be completed at a 70% threshold to earn a passing grade of C or higher. This ensured all students demonstrated competence in the essential learning objectives.

Beyond this baseline, seven optional pathways provided opportunities to extend learning and improve grades. These included engagement and participation, coding puzzles, weekly discussions, reteaching concepts through YouTube videos, extending prior assignments (e.g., adding JavaScript), a technology scavenger hunt, and an optional final exam. Each pathway appealed to different learning preferences and gave students multiple ways to demonstrate mastery.

Figure 4 illustrates the overall course framework, showing the balance of required and optional assignments and the distribution of points. Importantly, while the traditional course required 900 points for an A, the assignment choice framework required 1050 points out of 1500, raising expectations while offering students flexible paths to success.

6. RESULTS

To determine whether grade distributions were lower under traditional methods, we next looked beyond a single CS1 course to the past five years of teaching three distinct service courses. Using a dataset of more than 4000 students and five different professors, we examined whether any combination of course offering or instructor correlated with improved grade distributions. The analysis compared traditional and assignment choice classes. The Grade Point Average (GPA) for the assignment choice class was tightly centered just above 3.75, while the traditional classes had a wider range and a GPA of around 3.4. While this provides further evidence that our method can improve grades, it does not account for differences among professors.

6.1. The Experiment

A more formal study of the effects of assignment choice and competency-based grading was essential. To enable a direct comparison of outcomes, we designed a study with both an experimental group and a control group. Two separate classes of 100 students each were taught consecutively in the same semester by the same professor and teaching assistants. The 9:10 a.m. class was the control group and followed traditional grading methods. The 10:20 a.m. class was the experimental group, in which competency-based grading was implemented with assignment choice. By keeping the instructional team and course content consistent, we sought to minimize confounding variables and focus on the effect of the new approach on student outcomes. At the end of the semester, we collected and analyzed data on student performance, retention rates, and satisfaction levels in both groups. Students enrolled in CSCE 111 based on their need for the class and chose the section time that fit their schedules; when they registered, they had no information about any difference between the classes.

6.1.1. The Control Group

In the control group, the course began with an introductory session in which the syllabus and grading criteria were reviewed and discussed with the students. Grading followed traditional principles: the course grade was built from a set of assignments, each individually weighted, with the weights totaling 100%. All assignments were aligned with the course’s learning objectives and made available to students in Canvas, our Learning Management System (LMS). The assignment types included Reading and Quizzes to gauge comprehension, hands-on Coding Assignments to apply theoretical knowledge, practical Lab Assignments to reinforce skills, collaborative Group Projects to foster teamwork, Engagement activities to promote active participation, and Discussions to encourage critical thinking and peer interaction. All students in the control group were required to complete the same set of assignments, ensuring consistency and comparability across the class. The course used a standard grading scale (90% or higher for an A, 80% or higher for a B, and so forth), giving students a transparent understanding of performance expectations and evaluation criteria.

6.1.2. The Experimental Group

In the experimental group, the course also began with an introductory session in which the syllabus and grading criteria were reviewed and discussed with the students. Grading followed our assignment choice framework: the course grade was built from a set of core assignments in four categories, each with its own point total contributing to the final grade. Successful completion of the core assignments, defined as 70% or above in each category, was required to earn a C or higher in the course. Seven additional categories were available for students to choose from, providing assignment choice.

The core assignment types were four of the categories from the traditional course: Reading and Quizzes to gauge comprehension (100 points), hands-on Coding Assignments to apply theoretical knowledge (500 points), practical Lab Assignments to reinforce skills (100 points), and collaborative Group Projects to foster teamwork (200 points). Together these represent 900 of the 1500 available points in the course, which, as Figure 4 shows, amounts to a C.

The choice assignments include two categories from the traditional course offering: Engagement Activities to promote active participation (100 points) and Discussions to encourage critical thinking and peer interaction (100 points). Each of these categories consists of weekly assignments that span the entire semester. We then created five additional assignments to broaden the offerings:

- Technology Scavenger Hunt (50 points): Students embark on a scavenger hunt to explore various resources, materials, or concepts related to the course content, which prompts them to seek out information independently. Points are awarded for completing the tasks or objectives outlined in the scavenger hunt, promoting a sense of discovery and curiosity.
- YouTube Channel (100 points): Students create educational content related to the course topics. They may produce videos, tutorials, or presentations demonstrating their understanding of key concepts and their ability to communicate them effectively. This assignment fosters creativity, multimedia skills, and the ability to present complex ideas in an understandable format.
- Coding Puzzles (100 points): Students solve a series of programming problems or puzzles using the coding skills and knowledge acquired in the course. These puzzles may require logical thinking, problem-solving, and application of programming concepts. Through these hands-on coding activities, students deepen their understanding of coding principles and strengthen their problem-solving abilities.
- JavaScript Page (50 points): Students add JavaScript to their web page to implement interactive features or functionality. This assignment teaches basic JavaScript programming skills and encourages creativity while reinforcing concepts learned in the course.
- Optional Final Exam (100 points): Students can demonstrate their mastery of the course material through a comprehensive assessment. Unlike a traditional exam, this one is optional, so students choose whether to take it based on their learning preferences and goals. Those who take it can earn additional points toward their final grade, an incentive for students who want to challenge themselves and showcase their knowledge.

We explain all the assignments in the core and choice categories, including the requirement to earn 70% before any points are added to the total, which encourages at least a basic understanding of each topic. Next, students use the course calculator to better understand what it takes to earn the grade they want in this class. The choice assignments add up to 600 points, giving the course a total of 1500 points. Again referring to Figure 4, only 1050 points are needed for an A, so students can pick the assignments they most want to do.
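The point arithmetic can be checked by enumerating the paths to an A. The following sketch uses the point values listed above. It assumes that a student earns all 900 core points and completes each selected choice category for full points. Both assumptions are simplifications for illustration, and the helper names are ours.

```python
from itertools import combinations

# Point values as listed in Section 6.1.2.
CORE = {"Reading and Quizzes": 100, "Coding Assignments": 500,
        "Lab Assignments": 100, "Group Projects": 200}
CHOICE = {"Engagement Activities": 100, "Discussions": 100,
          "Technology Scavenger Hunt": 50, "YouTube Channel": 100,
          "Coding Puzzles": 100, "JavaScript Page": 50,
          "Optional Final Exam": 100}
A_THRESHOLD = 1050


def paths_to_a(core_points=None):
    """List every subset of choice categories that reaches the A threshold.

    Assumes each selected choice category is completed for full points and,
    by default, that all core points are earned.
    """
    if core_points is None:
        core_points = sum(CORE.values())
    names = list(CHOICE)
    return [
        combo
        for k in range(len(names) + 1)
        for combo in combinations(names, k)
        if core_points + sum(CHOICE[name] for name in combo) >= A_THRESHOLD
    ]


paths = paths_to_a()
print(sum(CORE.values()), sum(CHOICE.values()))  # 900 600
print(len(paths), min(len(p) for p in paths))    # 119 2
```

Under these assumptions, 119 of the 128 possible combinations of choice categories reach an A. The smallest need only two categories worth at least 150 points together, which illustrates how much freedom the 1050-point threshold leaves students.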

6.2. The Outcome

6.2.1. The Beginning of the Semester

At the beginning of the semester, all students were given the survey we created for this class. Our first goal was to determine whether the two classes were statistically drawn from the same population of non-CS majors with respect to their CS proficiency and comfort. As a sanity check on our methods, we also tested whether the 14 teaching assistants (TAs) differed statistically from the students taking the class. Given our sample of about 200 students and the ordinal Likert-scale data, we used the Wilcoxon rank-sum test (Perolat et al., 2015; Rosner & Glynn, 2009).

For the Wilcoxon rank-sum test, our null hypothesis was “The two populations are the same,” with each population consisting of the students in one of the classes. The test found no significant difference between the two classes (p = 0.35), so we could not reject the null hypothesis. When we applied the same test with the class as one population and the TAs as the other, the results gave strong evidence (p < 2.2e-16) for rejecting the null hypothesis “The two populations are the same.” This result was expected, as the TAs were very comfortable with CS, the course, and their ability to help students succeed. The TAs were recruited from students who had done well in the class previously or from more advanced CS majors.
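Statistical packages implement this test directly (for example, R's `wilcox.test` or SciPy's `scipy.stats.mannwhitneyu`). To show the mechanics, the following dependency-free Python sketch computes the rank-sum statistic, assigning tied Likert responses the average of their ranks, and a two-sided p-value from the normal approximation with a tie-corrected variance. It is an illustration, not the analysis code used in this study; because it omits the continuity correction, its p-values can differ slightly from those packages' defaults, and the responses shown are made up.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum test (normal approximation,
    tie-corrected variance, no continuity correction).
    Returns (W, z, p), where W is the rank sum of sample x."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    w = sum(ranks[:n1])
    mean_w = n1 * (n + 1) / 2
    # Tie correction: each group of t tied values contributes t^3 - t.
    counts = {}
    for v in pooled:
        counts[v] = counts.get(v, 0) + 1
    ties = sum(t**3 - t for t in counts.values())
    var_w = n1 * n2 / 12 * ((n + 1) - ties / (n * (n - 1)))
    if var_w <= 0:  # every response identical: no evidence of a difference
        return w, 0.0, 1.0
    z = (w - mean_w) / math.sqrt(var_w)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value from the standard normal
    return w, z, p


# Hypothetical 5-point Likert responses from two sections (not study data).
section_a = [3, 4, 2, 3, 5, 3, 4, 2, 3, 4]
section_b = [3, 3, 4, 2, 4, 3, 2, 3, 5, 3]
w, z, p = rank_sum_test(section_a, section_b)
print(f"W = {w}, z = {z:.2f}, p = {p:.2f}")
```

A large p-value, as with the two classes here, means the test found no evidence of a difference; it does not prove that the populations are identical.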

6.2.2. The End of the Semester

At the end of the semester, all students completed the same survey again. We aimed to determine whether the two classes differed in their confidence by the end of the course. Plotting the raw data showed that both classes ended the semester with higher confidence and satisfaction in their abilities than they had at the start. Only one question, “I believe coding skills are valuable in various career paths,” remained constant from the beginning to the end of the semester, with an average score of 4.5 on the 5-point Likert scale.

From the factor analysis and grouping of our survey questions, Factors 2 and 3 improved over the semester but did not differ between the two classes. Factor 2 groups questions about students’ confidence in coding. Factor 3 groups questions about students’ views of the value of coding to themselves and to society.

Factor 1 groups questions about students’ understanding of code and its use. For Factor 1, the choice class scored 1 to 2 points higher on the Likert scale than the traditionally taught class on five of the seven questions in the group. Using the median as the input for the Wilcoxon rank-sum test lets us see these increases more clearly (Lin et al., 2021). Table 3 lists the questions with a statistically significant difference between the two classes’ answers. A zero for a question means no statistically significant difference between the two classes, which was the case for nine of the fourteen questions. A one or two indicates that the choice class scored one or two points higher on the Likert scale at the end of the semester.
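One reading of how the Table 3 values are formed, consistent with the description above but not spelled out in it, is that each cell is zero unless the rank-sum test is significant, in which case it records how many Likert points higher the choice class’s median response was. The Python sketch below encodes that interpretation; the function name `table3_entry`, the 0.05 threshold, and the sample responses and p-values are all assumptions for illustration.

```python
from statistics import median

ALPHA = 0.05  # assumed significance threshold


def table3_entry(choice, traditional, p_value, alpha=ALPHA):
    """Hypothetical reconstruction of a Table 3 cell: 0 when the rank-sum
    test is not significant, otherwise the difference in medians
    (choice section minus traditional section) on the Likert scale."""
    if p_value >= alpha:
        return 0
    return median(choice) - median(traditional)


# Made-up responses and p-values for illustration only.
print(table3_entry([4, 5, 4, 5, 4], [2, 3, 2, 3, 2], p_value=0.001))  # 2
print(table3_entry([4, 4, 3, 4, 4], [4, 3, 4, 4, 3], p_value=0.40))   # 0
```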

All of the differences between the classes came from Factor 1 of the survey instrument, the set of questions dealing with “My understanding of coding and its use.” The assignment choice class scored higher on the following questions:

Question 1: I have a basic understanding of what coding is.

Question 5: I have experience with any programming languages.

Question 10: I am satisfied with my coding abilities.

Question 11: I have a clear understanding of how coding languages work.

Question 13: I am confident in my ability to write clear and organized code.

Table 3 also shows that Question 10 had the largest difference, with the choice class scoring 2 points higher than the control group.

By the end of the semester, the control group had changed, and we were less confident that its remaining students still represented the same population. The experimental group remained stable, with only four students dropping, while the control group had nine drops. The students who dropped from the control group were weighted toward the less confident students, leaving those who stayed with slightly higher incoming confidence. The students who dropped from the experimental group, while fewer, were more evenly distributed across incoming confidence levels. This pattern is consistent with our hypothesis that assignment choice enhances student satisfaction and retention.

One of our main motivations for researching this topic was the high rate of students earning a D or F or withdrawing from the class (the DFQ rate). Such outcomes can delay a student’s graduation or even lead them to change majors without successfully completing a required course. Our observational data showed that the traditionally taught classes averaged a 25% DFQ rate over the previous five years, while classes with choice added averaged a 10% DFQ rate. However, without a control class and an experimental class taught simultaneously by the same professor to a population of 200 students, those observations could not account for differences in assignments, TAs, or even the professors themselves. In this study, the control group, taught by the traditional method, had an 18% DFQ rate, which is still high. The experimental group, taught with assignment choice, had an 11% DFQ rate, a considerably more acceptable level.

This reduction represents a relative risk reduction of 39%, meaning the risk of earning a D or F or withdrawing was 39% lower in the assignment choice section than in the traditional section. While this difference did not reach conventional thresholds for statistical significance, its magnitude is practically important for course planning and retention initiatives.
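The effect sizes quoted for the DFQ comparison can be reproduced from the two reported rates alone. The Python sketch below (function names are illustrative) computes the relative risk reduction in DFQ outcomes and the odds ratio for course completion. It also shows why a completion figure of 1.8 must be read as an odds ratio: the ratio of the completion rates themselves (89% vs. 82%) is only about 1.09.

```python
def relative_risk_reduction(risk_control: float, risk_treatment: float) -> float:
    """Fractional drop in risk relative to the control group."""
    return (risk_control - risk_treatment) / risk_control


def odds(p: float) -> float:
    """Convert a probability to odds."""
    return p / (1 - p)


dfq_traditional = 0.18  # DFQ rate, traditional section (from the text)
dfq_choice = 0.11       # DFQ rate, assignment choice section (from the text)

rrr = relative_risk_reduction(dfq_traditional, dfq_choice)

# Completion is the complement of a DFQ outcome.
completion_traditional = 1 - dfq_traditional  # 0.82
completion_choice = 1 - dfq_choice            # 0.89
odds_ratio = odds(completion_choice) / odds(completion_traditional)
risk_ratio = completion_choice / completion_traditional

print(f"relative risk reduction: {rrr:.0%}")        # prints 39%
print(f"completion odds ratio:   {odds_ratio:.1f}")  # prints 1.8
print(f"completion risk ratio:   {risk_ratio:.2f}")  # prints 1.09
```

These are point estimates only; a significance test or confidence interval would also require the per-section student counts.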

In addition to quantitative gains, qualitative feedback reinforced these findings. Several students expressed appreciation for the flexibility of assignment choice and reported feeling less stressed than peers in the traditional section. For example, one student wrote, “My roommate was in the other section without choices, and she felt stuck. I liked that I could pick assignments that fit my schedule and interests—it kept me motivated.” Another remarked, “Knowing I wasn’t locked into one path made me feel more in control of my grade.” One student even said, “I was in the unfun class.” When asked what she meant, she explained that she did not get to pick her assignments.

6.3. Answering the Research Questions

6.3.1. RQ1

How well can adding assignment choice to a CS1 course help with student satisfaction?

Students in the assignment choice group reported significantly higher satisfaction on several survey items, with 5 of the 14 questions showing a statistically significant improvement compared to the control group. Notably, these students expressed greater confidence in and satisfaction with their coding abilities, indicating enhanced self-efficacy and a more positive overall learning experience. Cohen’s d values of 0.32–0.48 for these key items indicate small-to-moderate practical significance.
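The text does not state how Cohen’s d was computed; a common choice, assumed in this Python sketch, divides the difference in section means by the pooled sample standard deviation. By Cohen’s conventional benchmarks (about 0.2 small, 0.5 medium, 0.8 large), values of 0.32 to 0.48 fall between small and medium. The responses below are hypothetical.

```python
import math
from statistics import mean, variance


def cohens_d(treatment, control):
    """Cohen's d: difference in means divided by the pooled sample standard deviation."""
    n1, n2 = len(treatment), len(control)
    pooled_var = ((n1 - 1) * variance(treatment) + (n2 - 1) * variance(control)) / (n1 + n2 - 2)
    return (mean(treatment) - mean(control)) / math.sqrt(pooled_var)


# Hypothetical end-of-semester Likert responses to one survey item (not study data).
choice_section = [4, 5, 3, 4, 4, 5, 3, 4]
traditional_section = [3, 4, 3, 4, 3, 5, 2, 4]
d = cohens_d(choice_section, traditional_section)
print(f"d = {d:.2f}")
```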

6.3.2. RQ2

How well can adding assignment choice to a CS1 course help with student competent course completion?

The DFQ rate dropped from 18% in the traditional section to 11% in the assignment choice section, indicating a meaningful, though not statistically significant, improvement in competent course completion. This suggests that assignment choice may help more students progress successfully in their studies, reducing setbacks such as course repetition or delayed graduation. Reporting effect size provides additional context: the odds of successfully completing the course were approximately 1.8 times higher in the assignment choice section than in the traditional section.

6.3.3. RQ3

Does adding assignment choice help students better learn the course learning objectives?

While the GPA difference between sections was not statistically significant (3.3 vs. 3.5), the assignment choice group had a higher percentage of A and B grades (85% vs. 73% in the traditional group) and fewer low grades, suggesting that more students achieved the course learning objectives at a higher level. These results imply that assignment choice can support improved mastery without compromising academic rigor.

Taken together, these results show promise for student satisfaction, course retention, and course mastery, and they begin to fill the gap in research that combines assignment choice with competency-based grading to improve course outcomes.

7. DISCUSSION

This study, with 200 students, 14 TAs, one professor, and two lecture sections of an introductory CS class, provided a well-suited setting for a control-and-experimental-group design. One section followed traditional grading, while the other incorporated assignment choice with competency-based grading. To evaluate satisfaction and confidence in coding abilities, we developed and validated a survey instrument administered to both groups at the beginning and end of the semester.

At the start of the course, statistical testing showed no significant difference between the two groups (p = 0.35), consistent with the control and experimental sections being drawn from the same underlying student population in terms of initial CS proficiency and comfort. At the end of the course, the p-value rose to 0.52, again well above the 0.05 threshold. In practical terms, the two groups remained statistically indistinguishable on the survey as a whole, and the differences that did emerge were concentrated in specific items. Both groups improved, but the assignment choice section showed stronger gains in confidence and satisfaction on five Factor 1 items, as detailed in the Results section.

We also observed a lower DFQ rate in the experimental group, suggesting that assignment choice may help a wider range of students remain in the course through completion. This is particularly important in service-level CS courses, where retention challenges are common.

Qualitative feedback from students provides additional insight into these findings. Many compared their experiences with peers in the traditional section, noting that assignment choice fostered a greater sense of autonomy and reduced stress. As one student explained, “My roommate was in the other section without choices, and she felt stuck. I liked that I could pick assignments that fit my schedule and interests—it kept me motivated.” Another reflected, “I talked to friends in the other class, and they seemed way more stressed. I felt like I had options to show what I learned instead of just hoping I did well on the same assignments as everyone else.” These comments highlight how assignment choice supported the sense of autonomy and competence described by Self-Determination Theory. Similar goals have been pursued in related interventions. For instance, Johnson et al. (2024) reported that RadGrad, a curricular planning and engagement tool, improved retention and diversity in computer science by increasing autonomy and student ownership. While RadGrad emphasizes degree planning, our approach embeds autonomy directly into course-level grading and assignment design.

Together, the quantitative and qualitative results suggest that combining assignment choice with competency-based grading not only improved satisfaction and retention but also fostered a more positive classroom climate where students felt ownership of their learning.

7.1. Limitations and Scalability

While this study provides promising evidence for the use of assignment choice and competency-based grading in a CS1 service course, several limitations should be noted. First, the research was conducted at a single institution in two sections of the same course taught during one semester. Although this design controlled for many confounding factors, it also constrains generalizability. Findings may not fully extend to other institutions, larger class sizes, or courses with different student demographics.

Second, the novelty of assignment choice may have contributed to initial gains in satisfaction and motivation. Future research should examine whether the benefits persist across multiple semesters once the approach is no longer new to students. Third, while students selected their course sections without knowledge of instructional differences, the possibility of self-selection bias in class timing cannot be entirely dismissed.

Finally, this study focused on a service-level CS1 course for non-majors. Outcomes may differ in upper-level courses or in programs where students have chosen computing as their primary field of study.

In terms of scalability, the framework is designed to be adaptable, but successful adoption requires thoughtful planning. Initial implementation involves additional instructor workload to create alternative assignments, design a course map, and develop supporting tools such as the course calculator. However, once these resources are developed, the system can be reused with minimal additional effort in future semesters, so faculty workload is front-loaded rather than ongoing. Scalability is further enhanced by the flexibility of the framework, which allows instructors to tailor the number and type of choice assignments to available resources. Faculty perspectives are also critical to the feasibility of scaling competency-based approaches: Townsley and Schmid (2022) found that grading practices and instructor buy-in can either accelerate or hinder adoption, underscoring the need for institutional support during implementation.

Overall, while limitations must be acknowledged, the results suggest that assignment choice combined with competency-based grading can be a feasible and scalable strategy for improving satisfaction, retention, and student success in service courses.

8. CONCLUSION

This study provides evidence that combining competency-based grading with assignment choice can meaningfully improve outcomes in a computer science service course. Students in the assignment choice section reported higher satisfaction, greater confidence, and stronger perceived understanding of coding. These gains were supported by small-to-moderate effect sizes (Cohen’s d = 0.32–0.48 for key survey items), suggesting practical significance beyond statistical results. Retention improved as well: the DFQ rate declined from 18% in the traditional section to 11% in the assignment choice section, representing a 39% relative risk reduction and an odds ratio of 1.8 for course completion, although this difference did not reach statistical significance.

Importantly, qualitative feedback illustrated why students valued the framework. Many compared their experiences with peers in the traditional section, emphasizing that choice gave them greater autonomy, reduced stress, and allowed them to demonstrate learning in ways that felt authentic. This aligns with Self-Determination Theory, which highlights autonomy and competence as drivers of motivation and persistence.

While promising, these results must be considered in light of study limitations. Findings are drawn from a single institution, in two sections of the same course, and may not generalize to other contexts or upper-level courses. Some improvements may reflect a novelty effect, and students self-selected into class times. Still, the framework is scalable: although initial implementation requires effort in creating alternative assignments and support tools, the workload is front-loaded, and resources can be reused across semesters.

Overall, the evidence suggests that assignment choice combined with competency-based grading provides a feasible, student-centered strategy for improving satisfaction, retention, and mastery in service-level computing courses. By balancing rigor with autonomy, this approach offers a model that other instructors may adapt to support diverse learners in introductory STEM contexts.